Core Concept and the Problem It Aims to Solve

AlphaEvolve is a novel artificial intelligence system developed by Google DeepMind designed to automatically discover and optimize algorithms. Its core idea is to combine the creativity of large language models (LLMs) with an automated evaluation mechanism to continuously refine algorithmic solutions in an evolutionary loop.

AlphaEvolve is like an indefatigable algorithm engineer: it can autonomously write code, test the results, refine its work, and gradually evolve superior algorithms. (Think of it as a premium, perpetual-motion workhorse.)

This system addresses the challenge of how AI can autonomously innovate algorithms.

Historically, human experts in mathematics and computer science have spent years—or required sudden insights—to discover new algorithms. AlphaEvolve aims for AI to undertake this challenge independently. Its underlying philosophy is inspired by Darwinian evolution: by “mutating” (generating a variety of candidate solutions) and “selecting” (retaining the superior ones), it gradually approaches the optimal solution.

This evolutionary optimization allows AlphaEvolve to explore solution paths in a vast search space that humans might never consider. Unlike traditional methods that require human-designed algorithms, AlphaEvolve relies on explicit, objective indicators—such as correctness and runtime efficiency—to automatically judge the quality of solutions.

As long as a problem can be quantitatively evaluated, AlphaEvolve can attempt improvements, opening the door to automated exploration in many complex domains.

AlphaEvolve follows the autonomous learning tradition of DeepMind’s “Alpha” series algorithms (such as AlphaGo and AlphaZero) but introduces innovations. While previous systems primarily employed reinforcement learning, AlphaEvolve abandons reinforcement learning in favor of a straightforward genetic algorithm strategy, rendering the system simpler and more generalizable across different problems.

Some experts have called it “the first case of a general-purpose LLM successfully generating entirely new scientific discoveries.” Ultimately, the concept behind AlphaEvolve is to endow AI with an “evolutionary innovation” capability, enabling machines to experiment and refine, just as human researchers do, to tackle long-standing challenges.

Operation Mechanism: How Does AlphaEvolve Work?

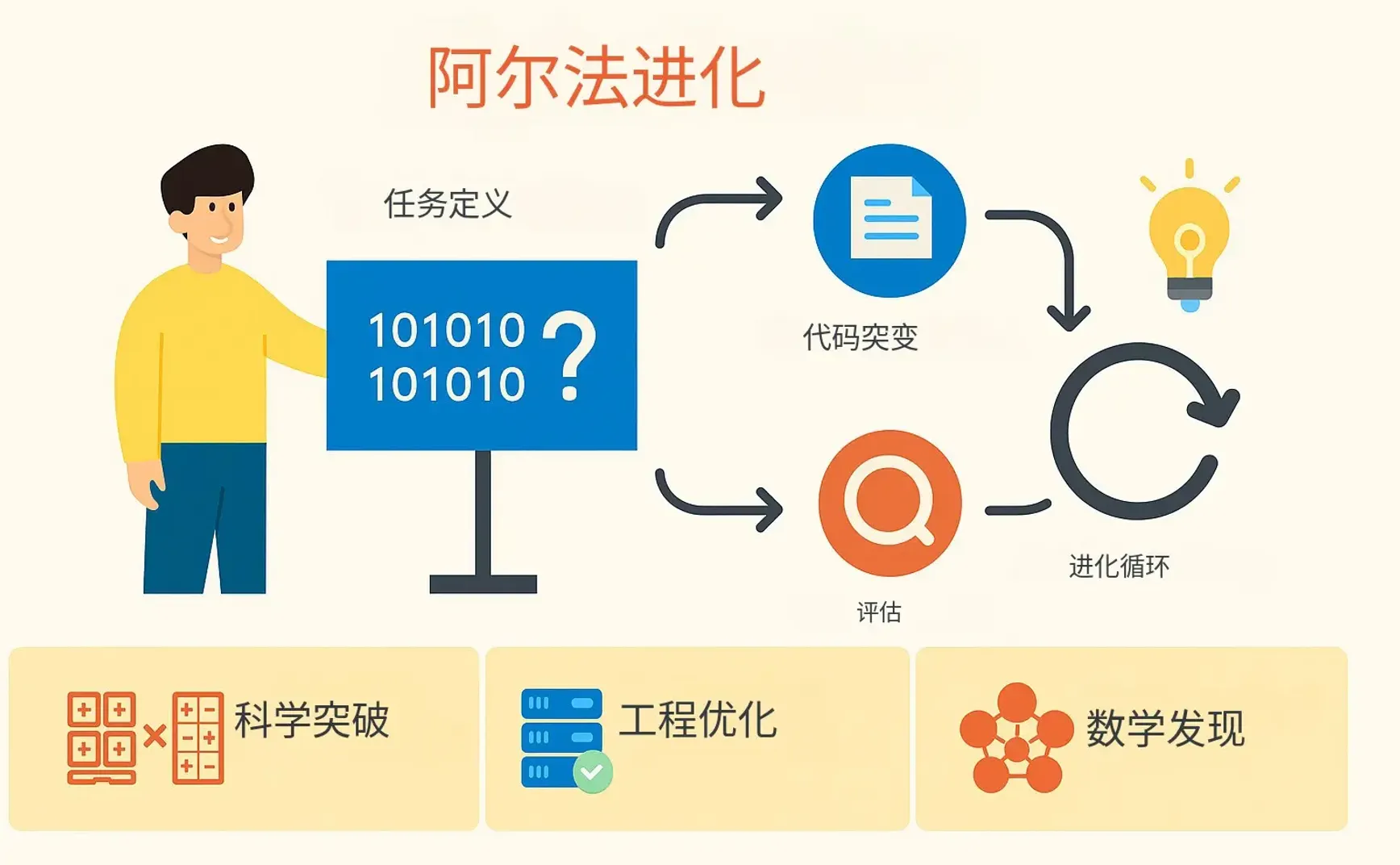

The operational process of AlphaEvolve can be aptly compared to an automated “algorithm factory” that undergoes cycles of proposal, testing, selection, and further proposal. Its overall architecture is shown in the diagram above, which is described next.

Diagram of AlphaEvolve's system architecture: Scientists/engineers provide an initial program and evaluation criteria. The system cooperates via the Prompt Sampler (prompt assembly module), a collection of LLM engines, a pool of Evaluators, and a Program Database to continuously improve the program in a genetic algorithm style, eventually producing the optimal solution. Below the architecture is pseudocode for the loop control process, illustrating mutation and selection from parent to offspring programs.

When using AlphaEvolve, human users (scientists or engineers) must first define the problem and provide: 1) an initial baseline program (even if it is naive and inefficient), and 2) evaluation code (capable of automatically verifying the solution's correctness and quantifying its performance).

This effectively sets the “environment” and “survival criteria” for evolution. Then, the large language model (AlphaEvolve uses Google’s own Gemini series models) acts as the “brain” to generate creative ideas for algorithmic improvements.
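To make these two inputs concrete, here is a minimal sketch of what a user might hand to such a system for a toy sorting task. The names `initial_program` and `evaluate`, and the shape of the returned score, are assumptions made for illustration; they are not AlphaEvolve's actual interface.

```python
import random
import time

def initial_program(xs):
    """Naive baseline: bubble sort. Deliberately simple and inefficient."""
    xs = list(xs)
    for i in range(len(xs)):
        for j in range(len(xs) - 1 - i):
            if xs[j] > xs[j + 1]:
                xs[j], xs[j + 1] = xs[j + 1], xs[j]
    return xs

def evaluate(program, trials=20, size=200, seed=0):
    """Hypothetical evaluator: checks correctness and measures runtime."""
    rng = random.Random(seed)  # fixed seed so every candidate sees the same inputs
    correct = 0
    elapsed = 0.0
    for _ in range(trials):
        data = [rng.randint(0, 10_000) for _ in range(size)]
        start = time.perf_counter()
        result = program(data)
        elapsed += time.perf_counter() - start
        if result == sorted(data):
            correct += 1
    return {"correct": correct / trials, "avg_seconds": elapsed / trials}

baseline_score = evaluate(initial_program)
```

Any candidate that replaces `initial_program` can be scored with the same `evaluate` call, which is what lets the search run without a human in the loop.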

The core loop of AlphaEvolve operates as follows:

- First, the system samples existing solutions from the program database as “parent” candidates, possibly selecting high-quality code fragments as “inspiration.”

- Next, the Prompt Sampler combines the parent solution and the inspirational fragments into a prompt that describes the problem and possible avenues for improvement, and sends it to the LLM. Guided by the prompt, the LLM (a combination of Gemini Flash and Gemini Pro) generates an improvement suggestion, typically presented as a “diff” of changes to the parent program.

- The system then applies this modification to the parent program to produce a new “offspring” program.

- The Evaluators automatically run the offspring program and compute its score (for example, the time and resources required to correctly solve the problem).

- Finally, the program database records this trial and its results. If the offspring outperforms previous versions, it may be chosen as the foundation for the next round of evolution.
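The step in which a diff is applied to the parent to produce an offspring can be sketched as follows. The SEARCH/REPLACE block layout and the function name `apply_diff` are assumptions for illustration; the text above only says that suggestions are typically presented as a diff.

```python
import re

# Assumed diff layout: one or more blocks of the form
#   <<<<<<< SEARCH
#   (text to find in the parent)
#   =======
#   (text to put in its place)
#   >>>>>>> REPLACE
DIFF_BLOCK = re.compile(
    r"<<<<<<< SEARCH\n(.*?)\n=======\n(.*?)\n>>>>>>> REPLACE",
    re.DOTALL,
)

def apply_diff(parent_source, diff_text):
    """Return the offspring source obtained by applying each block in order."""
    child = parent_source
    for search, replace in DIFF_BLOCK.findall(diff_text):
        if search not in child:
            raise ValueError(f"search text not found in parent: {search!r}")
        child = child.replace(search, replace, 1)  # replace first occurrence only
    return child

parent = "def step(x):\n    return x + 1\n"
diff = (
    "<<<<<<< SEARCH\n"
    "    return x + 1\n"
    "=======\n"
    "    return x + 2\n"
    ">>>>>>> REPLACE"
)
child = apply_diff(parent, diff)
```

Rejecting a diff whose search text is missing keeps malformed suggestions from silently producing an unchanged offspring.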

Through repeated iterations of the above loop, AlphaEvolve implements an automatic “survival of the fittest” process. This distributed control loop continuously generates numerous proposals and selects the best ones, much like the trial and error process in biological evolution.
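The loop as a whole can be sketched on a toy problem. This is an illustration under strong simplifying assumptions: the "program" is just a list of integers, the evaluator measures distance to a fixed target, and a random edit stands in for the LLM. All names (`evaluate`, `propose`, `evolve`) are hypothetical.

```python
import random

def evaluate(candidate):
    """Toy evaluator: higher is better, and the best possible score is 0."""
    target = [3, -1, 4, 1, -5]
    return -sum((c - t) ** 2 for c, t in zip(candidate, target))

def propose(parent, rng):
    """Stand-in for the LLM: returns a small random edit of the parent."""
    child = list(parent)
    i = rng.randrange(len(child))
    child[i] += rng.choice([-1, 1])
    return child

def evolve(initial, generations=3000, pool_size=10, seed=0):
    rng = random.Random(seed)
    database = [(evaluate(initial), initial)]  # program database: (score, candidate)
    for _ in range(generations):
        # Sample a parent, favouring high scorers (best of three random picks).
        picks = [rng.choice(database) for _ in range(3)]
        _, parent = max(picks, key=lambda entry: entry[0])
        # Mutate, evaluate, and record the offspring.
        child = propose(parent, rng)
        database.append((evaluate(child), child))
        # Selection: keep only the top-scoring entries.
        database.sort(key=lambda entry: entry[0], reverse=True)
        del database[pool_size:]
    return database[0]

best_score, best = evolve([0, 0, 0, 0, 0])
```

The structure (sample a parent, generate a variant, score it, store it, prune the pool) mirrors the five steps listed earlier; a real system would replace `propose` with prompted LLM calls and `evaluate` with the user's evaluation code.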

Of course, AlphaEvolve does not rely on blind brute force. It benefits from the knowledge and creativity of the LLM to intelligently generate promising variants, while the evaluators ensure that each improvement is objectively justified. For instance, when optimizing code, the Gemini Flash model quickly suggests a wide range of ideas to ensure breadth of exploration, and the Gemini Pro model generates more sophisticated improvements to ensure depth. The evaluation phase guarantees that only code that passes validation and delivers better performance propagates.

This hybrid of LLM creativity and automated evaluation allows AlphaEvolve to combine the imaginative power of models with rigorous, verified outcomes, completing in a very short time what previously required repeated trial and error by human experts.

The entire process functions as an autonomous loop of algorithm refinement: “propose solution → test performance → let the better solution evolve to the next round” until no further improvement is found. It can be seen as a digital evolutionary factory that continuously eliminates inferior solutions and accumulates superior ones under clear evaluation standards, thereby automatically evolving high-quality solutions.

Achievements and Their Significance

Since its inception, AlphaEvolve has achieved remarkable results in fields including mathematics, engineering, and scientific research.

Data Center Scheduling Optimization (Engineering Application)

AlphaEvolve discovered a simple yet efficient scheduling heuristic for Google's internal cluster management system, Borg, enhancing server resource utilization.

This algorithm specifically targets the common “resource fragmentation” problem in data centers: when one type of resource (e.g., memory) on a machine is exhausted while others remain idle, how to schedule tasks to minimize wastage.
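A toy example may help illustrate the fragmentation problem. The scoring rule below is a made-up heuristic, not the one AlphaEvolve discovered, and the names `Machine`, `stranded_after`, and `pick_machine` are hypothetical. It simply prefers the placement that leaves a machine's free CPU and memory most balanced.

```python
from dataclasses import dataclass

@dataclass
class Machine:
    name: str
    free_cpu: float  # free CPU as a fraction of machine capacity
    free_mem: float  # free memory as a fraction of machine capacity

def stranded_after(machine, cpu, mem):
    """Imbalance of the leftovers if the task is placed on this machine.

    Returns None when the task does not fit. A large value means one
    resource would be nearly exhausted while the other sits idle.
    """
    left_cpu = machine.free_cpu - cpu
    left_mem = machine.free_mem - mem
    if left_cpu < 0 or left_mem < 0:
        return None
    return abs(left_cpu - left_mem)

def pick_machine(machines, cpu, mem):
    """Choose the feasible machine with the least stranded resource."""
    scored = [(stranded_after(m, cpu, mem), m) for m in machines]
    feasible = [(s, m) for s, m in scored if s is not None]
    if not feasible:
        return None
    return min(feasible, key=lambda pair: pair[0])[1]

machines = [
    Machine("a", free_cpu=0.50, free_mem=0.25),
    Machine("b", free_cpu=0.25, free_mem=0.50),
]
# Placing this task on "a" would use up all its memory and strand 0.375 CPU.
chosen = pick_machine(machines, cpu=0.125, mem=0.25)
```

Here the task goes to machine "b", which keeps some of both resources free for later tasks.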

AlphaEvolve’s solution is currently deployed in Google data centers and continuously recovers, on average, about 0.7% of Google’s worldwide compute resources.

Although 0.7% may seem modest, in an infrastructure as large as Google’s, it represents a significant efficiency boost—as if adding extra servers out of thin air! More importantly, this algorithm is implemented in simple, human-readable code, making it easy for engineers to understand, debug, and deploy.

This demonstrates that AlphaEvolve not only produces highly effective solutions but also ensures that these solutions are interpretable and practical.

Chip Hardware Design Optimization (Engineering Application)

AlphaEvolve has shown potential in assisting hardware engineering. In one experiment, it provided improvement suggestions for a highly optimized arithmetic circuit within the TPU, Google’s custom AI accelerator.

AlphaEvolve generated a modified version of the corresponding Verilog hardware description code, eliminating unnecessary bits in the circuit and streamlining the implementation without altering functionality. This modification passed rigorous functional verification and has been integrated into the design of the next-generation TPU chip.

It is worth noting that hardware circuits, especially those that have been meticulously optimized by humans, are rarely modified lightly. Yet AlphaEvolve discovered an optimization space unnoticed by human engineers.

This achievement signifies that AI can propose improvements using the language familiar to hardware engineers (such as Verilog), promoting collaboration between AI and hardware designers, and accelerating future chip development.

Acceleration of AI Model Training (Software Engineering Application)

AlphaEvolve improved core code in the training of large AI models with impressive results. It discovered a smarter method to partition large matrix multiplications, speeding up a key computational kernel in the Gemini LLM architecture by 23%.

This optimization reduced Gemini’s overall training time by about 1%. In cutting-edge model training, where immense computational resources are required, even a 1% speedup means significant savings in energy and time.
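The 23% kernel speedup and the roughly 1% reduction in total training time can be related with a back-of-the-envelope estimate in the style of Amdahl's law. Two assumptions are made here that the source does not state: the kernel accounts for a fraction $f$ of the original training time $T$, and a "23% speedup" means the kernel's runtime shrinks by a factor of $1/1.23$.

```latex
\begin{aligned}
T_{\text{new}} &= T\left[(1 - f) + \frac{f}{1.23}\right], \\
\frac{T - T_{\text{new}}}{T} &= f\left(1 - \frac{1}{1.23}\right) \approx 0.187\,f, \\
0.187\,f \approx 0.01 &\;\Longrightarrow\; f \approx 0.053 .
\end{aligned}
```

Under these assumptions, the kernel would account for roughly 5% of total training time, which is why a large local speedup translates into a modest overall one.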

Previously, human experts might have spent weeks fine-tuning low-level code for such optimizations, while AlphaEvolve accomplished a similar task in just a few days of automated experimentation. This allowed researchers to redirect their time and energy toward higher-level innovation.

In addition to matrix operations, AlphaEvolve also found efficiency improvements in highly optimized GPU assembly code. For example, it fine-tuned the FlashAttention kernel implementation in Transformer models, achieving up to a 32.5% speedup—remarkable since low-level code is usually very hard to optimize further, yet the AI found a breakthrough!

AlphaEvolve not only enhanced the performance of AI systems but also changed the paradigm of optimization work—from manual trial-and-error to automated exploration and refinement by AI.

New Breakthrough in Matrix Multiplication Algorithms (Mathematics Domain)

In fundamental mathematical computations, AlphaEvolve achieved a historic breakthrough by breaking a 56-year record in matrix multiplication algorithms.

Matrix multiplication is a fundamental problem in computer science, and for a long time, researchers have sought faster multiplication algorithms. As early as 1969, Volker Strassen discovered a clever method to multiply 2×2 matrices using 7 multiplications instead of the conventional 8, initiating the pursuit of optimizing matrix multiplication.

However, for 4×4 matrices, the best known approach for over half a century was to apply Strassen’s algorithm recursively, treating each 4×4 matrix as a 2×2 matrix of 2×2 blocks. This requires 7 × 7 = 49 scalar multiplications.
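The count of 49 can be checked directly. The sketch below implements the textbook form of Strassen's algorithm recursively and tallies scalar multiplications; the helper names and the `counter` argument are choices made for this example.

```python
def add(X, Y):
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def sub(X, Y):
    return [[x - y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def block(M, r, c, h):
    """Extract the h-by-h block of M whose top-left corner is (r, c)."""
    return [row[c:c + h] for row in M[r:r + h]]

def strassen(A, B, counter):
    """Multiply square matrices whose size is a power of two.

    `counter` is a one-element list used to tally scalar multiplications.
    """
    n = len(A)
    if n == 1:
        counter[0] += 1
        return [[A[0][0] * B[0][0]]]
    h = n // 2
    A11, A12, A21, A22 = (block(A, r, c, h) for r in (0, h) for c in (0, h))
    B11, B12, B21, B22 = (block(B, r, c, h) for r in (0, h) for c in (0, h))

    # Strassen's seven block products.
    M1 = strassen(add(A11, A22), add(B11, B22), counter)
    M2 = strassen(add(A21, A22), B11, counter)
    M3 = strassen(A11, sub(B12, B22), counter)
    M4 = strassen(A22, sub(B21, B11), counter)
    M5 = strassen(add(A11, A12), B22, counter)
    M6 = strassen(sub(A21, A11), add(B11, B12), counter)
    M7 = strassen(sub(A12, A22), add(B21, B22), counter)

    C11 = add(sub(add(M1, M4), M5), M7)
    C12 = add(M3, M5)
    C21 = add(M2, M4)
    C22 = add(add(sub(M1, M2), M3), M6)
    top = [left + right for left, right in zip(C11, C12)]
    bottom = [left + right for left, right in zip(C21, C22)]
    return top + bottom

def naive(A, B):
    """Schoolbook multiplication, used only to check the result."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

A = [[4 * i + j + 1 for j in range(4)] for i in range(4)]
B = [[(i - j) * (j + 2) for j in range(4)] for i in range(4)]
counter = [0]
C = strassen(A, B, counter)
```

For these 4×4 inputs the counter ends at 49, compared with 64 for the schoolbook method.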

AlphaEvolve surpassed its predecessor system, AlphaTensor (which was specifically targeted at matrix multiplication), by using its evolutionary algorithm to devise a new method that multiplies two 4×4 complex matrices with only 48 scalar multiplications.
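To see why saving a single multiplication matters, consider what happens if such a scheme is applied recursively to block matrices, in the same way Strassen's is. This is a sketch that assumes the 48-multiplication method can be used recursively; $M(n)$ denotes the number of scalar multiplications for $n \times n$ matrices.

```latex
\begin{aligned}
\text{Strassen (7 multiplications for } 2\times 2\text{):}\quad
  & M(n) = 7^{\log_2 n} = n^{\log_2 7} \approx n^{2.807}, \\
\text{48 multiplications for } 4\times 4\text{:}\quad
  & M(n) = 48^{\log_4 n} = n^{\log_4 48} \approx n^{2.792}.
\end{aligned}
```

The exponent drops only slightly, but the gap widens as the matrices grow.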

According to DeepMind, this is the first improvement over Strassen’s 1969 algorithm in this setting (4×4 complex-valued matrices), solving a puzzle that had remained open for decades. According to the report, AlphaEvolve also improved the best known results for 14 different matrix multiplication settings, demonstrating the breadth of its algorithm discovery capabilities.

This breakthrough proves that AlphaEvolve is capable of original findings in the abstract realm of mathematics. The significance lies not only in faster matrix multiplication (which benefits scientific computing, graphics processing, and more) but also in demonstrating AI’s potential for pure mathematical innovation.

New Progress on the “Kissing Number” Problem (Mathematics Domain)

AlphaEvolve not only reproduces known results but also makes breakthroughs on frontier mathematical problems.

The famous “kissing number problem” asks for the maximum number of non-overlapping unit spheres that can simultaneously touch a central unit sphere in a given dimension, a problem that has perplexed mathematicians for over 300 years. In many high dimensions, the exact kissing number remains unknown, with only upper and lower bounds established.

AlphaEvolve made progress on the 11-dimensional kissing number problem: it discovered a new spherical configuration in which a unit sphere can be touched by up to 593 other spheres of identical size, thereby raising the lower bound for the 11-dimensional kissing number to 593 (the previous record was 592).
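A lower bound of this kind is easy to verify automatically once a configuration is found, which is what makes the problem a good fit for an evaluator-driven search. The sketch below checks a candidate configuration in any dimension; the function name and tolerance are assumptions for illustration, and the example uses the well-known 2-dimensional case (six circles around one) rather than the 11-dimensional result.

```python
import itertools
import math

def is_kissing_configuration(centers, tol=1e-9):
    """Check that unit spheres at `centers` all touch a unit sphere at the
    origin and do not overlap each other."""
    for c in centers:
        # Touching the central sphere: centre distance must be exactly 2.
        if abs(math.hypot(*c) - 2.0) > tol:
            return False
    for p, q in itertools.combinations(centers, 2):
        # Neighbouring spheres may touch but must not overlap.
        if math.dist(p, q) < 2.0 - tol:
            return False
    return True

def ring(k):
    """k points evenly spaced on the circle of radius 2 in the plane."""
    return [(2 * math.cos(2 * math.pi * i / k), 2 * math.sin(2 * math.pi * i / k))
            for i in range(k)]

hexagon_ok = is_kissing_configuration(ring(6))   # six circles fit
heptagon_ok = is_kissing_configuration(ring(7))  # seven evenly spaced circles overlap
```

A valid configuration with N centres certifies that the kissing number in that dimension is at least N.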

While the improvement is by only one, in such a high-dimensional and complex problem, it represents a significant breakthrough, marking new progress in the field of mathematics. This again underscores AlphaEvolve’s prowess in combinatorial optimization and geometric problems.

According to reports, the research team tasked AlphaEvolve with tackling more than 50 open mathematical problems. In about 75% of the cases, it autonomously reproduced the current best-known results, and in 20% of the cases, it even found new solutions superior to those known.

This indicates that in many fields, AlphaEvolve already matches or even surpasses the exploratory capabilities of top human experts. Whether in theoretical significance or practical application, its new discoveries—from more efficient computational methods to optimization of resource usage and breaking new ground in pure mathematics—demonstrate the enormous potential of such an automated evolutionary AI system.

Figure: Two world records broken by AlphaEvolve. The left image shows the number of scalar multiplications required for 4×4 complex matrix multiplication, where AlphaEvolve reduced the best result since 1969 from 49 to 48. The right image shows the known lower bound on the kissing number in 11 dimensions (the number of unit spheres touching a central unit sphere), which AlphaEvolve raised from 592 to 593. Although each improvement is only by one, these problems had been stagnant for years, and AlphaEvolve’s results mark a small but significant step forward at the mathematical frontier.

Comparison with Other Automated Systems

As an automatically evolving system for general algorithm discovery, AlphaEvolve is distinctly different from other well-known automated AI systems. Here, we compare it with systems such as AutoML, OpenAI Codex, and AutoGPT.

Compared to AutoML (Automated Machine Learning)

AutoML refers to automated machine learning, primarily focused on automatically selecting or optimizing machine learning models and hyperparameters for a given data task.

For example, Google’s AutoML searches for neural network architectures, and research projects like AutoML-Zero use evolutionary search to assemble machine learning algorithms from basic mathematical operations. Compared with AlphaEvolve, AutoML has a narrower application scope: it is generally limited to optimizing machine learning models (such as model architecture search or parameter tuning) and does not directly generate general algorithm code.

AlphaEvolve, on the other hand, is designed for any problem that can be described and evaluated through code, not confined to machine learning alone. Additionally, while AutoML is often a tool for human data scientists to accelerate model training, AlphaEvolve functions more like an autonomous researcher, capable of independently seeking entirely new algorithmic solutions.

In essence, AutoML optimizes existing “recipes” (models), whereas AlphaEvolve can create new recipes, even solving purely computational challenges beyond the scope of machine learning.

Both employ automated search and evaluation techniques, but AlphaEvolve excels in its breadth of problem space and the complexity of generated solutions.

Compared to OpenAI Codex

OpenAI Codex is a large language model used for code generation and programming assistance. It can automatically generate code based on natural language descriptions and is integrated into tools like GitHub Copilot and ChatGPT’s developer mode to aid programmers. However, Codex is typically used in a way where the human provides the requirements and the model outputs code in one go, with humans verifying and revising as needed.

AlphaEvolve differs fundamentally: it focuses on algorithm discovery and optimization, aiming to generate entirely new algorithms, whereas Codex is geared toward day-to-day programming tasks (such as implementing features, fixing bugs, and refactoring code). Furthermore, AlphaEvolve employs an iterative evolutionary process with automatic evaluation and feedback loops that accumulate improvements over time, while Codex usually delivers a one-off output that lacks a self-refining, trial-and-error mechanism (unless prompted repeatedly by the user). Lastly, AlphaEvolve is currently aimed primarily at researchers for solving academic and engineering problems, whereas Codex is targeted at a broad developer audience for industrial applications.

In short, AlphaEvolve is like an “algorithm inventor,” whereas Codex is more of a “coding assistant.” Both demonstrate the powerful code-generation capabilities of LLMs, but AlphaEvolve stands out for its autonomy and innovation—capable of exploring unexplored territories—while Codex fundamentally relies on human guidance to address known problems.

Compared to AutoGPT and Other Autonomous Agents

AutoGPT is an experimental open-source project that emerged in 2023, aiming to build an autonomous agent based on GPT-4.

Users simply specify a goal, and AutoGPT automatically decomposes it into tasks, uses tools (such as web search), and executes in iterative cycles until the goal is achieved. It is an early attempt at letting GPT “drive itself,” an idea also explored by similar projects such as BabyAGI.

These agent systems have similarities with AlphaEvolve in that they both attempt to run multiple loops autonomously without requiring human input at every step. However, the differences are significant: first, AlphaEvolve is limited to algorithmic problem spaces with clear evaluation criteria, whereas AutoGPT tackles open-ended goals specified by the user.

The latter often lacks a clear, objective scoring system—for example, “plan a trip for me” is complex and difficult to evaluate quantitatively, which can lead AutoGPT to dead ends or ineffective actions. In contrast, every step in AlphaEvolve has a precise score (correct/incorrect, faster/slower, etc.), ensuring reliable improvements.

Secondly, AutoGPT relies more on GPT-4’s reasoning abilities and real-time information gathering to complete tasks, but it is not specialized in designing new algorithms; essentially, it operates by recombining existing knowledge rather than proactively exploring innovative solutions. AlphaEvolve, by contrast, is explicitly aimed at generating new algorithmic code, using experimentation to acquire new insights—for example, finding a superior solution.

In terms of implementation, AutoGPT, as a general-purpose agent, focuses on integrating various tools (internet, file systems, etc.), whereas AlphaEvolve is a specialized platform for algorithm evolution that integrates multiple AI models and evaluation modules to optimize code. In other words, AutoGPT is a jack-of-all-trades that often skims the surface, whereas AlphaEvolve is deeply focused in one domain, striving for breakthroughs.

With its clear goal orientation, self-assessment capabilities, and evolutionary optimization strategy, AlphaEvolve shows unique advantages in tackling complex research problems—advantages that current autonomous agent AIs do not possess.

Future Directions and Impact

As a technology for “general algorithm invention,” AlphaEvolve has only begun to show what it can do, and its future is highly anticipated.

Enhanced Capabilities Through Model Advances

AlphaEvolve relies on the code generation capabilities of large language models, so as the underlying LLM evolves (for example, with even more powerful next-generation Gemini models), its performance will rise in tandem. Future LLMs will be even better at understanding complex instructions and writing intricate code, meaning AlphaEvolve will be able to tackle even more complex algorithms across a wider range of areas.

It is foreseeable that AlphaEvolve will evolve alongside large models, continuously pushing the upper bound of solvable problems. Perhaps today it addresses problems spanning a few dozen lines of code; tomorrow it might tackle system-level algorithms involving thousands of lines.

Expansion into More Research Fields

Thus far, AlphaEvolve has demonstrated its prowess in mathematics and computer engineering. Its generality implies that any problem that can be formalized into an algorithm and its solution quantitatively evaluated can theoretically be attempted with AlphaEvolve.

In the future, we may see it branching into fields such as natural sciences and engineering design. For instance, it could be used to design new material structures in materials science, optimize synthetic pathways for new drug molecules in pharmaceuticals, or improve control algorithms in sustainable energy. While these problems are often complex and multi-staged, if corresponding simulation and evaluation functions (such as material performance simulation or drug activity predictions) can be established, AlphaEvolve might help uncover innovative solutions.

Of course, applying it in these fields also presents challenges, as evaluating the “goodness” of a solution might not be as straightforward as in a mathematical problem (possibly involving multi-objective trade-offs or lengthy evaluation processes). Nonetheless, AlphaEvolve can at least serve as an intelligent assistant for researchers, providing a wealth of preliminary ideas for human refinement, thereby accelerating research progress.

A New Paradigm for Human-Machine Collaboration

AlphaEvolve embodies a new model of collaborative innovation between AI and humans.

It does not completely replace humans—people are still responsible for posing the problem, setting evaluation criteria, and interpreting and validating the final outcomes. However, the heavy lifting of trial and error is handled by AI, greatly increasing efficiency. In the future, research and engineering teams may use systems like AlphaEvolve as everyday tools, brainstorming alongside human experts.

For example, in chip design, human engineers might conceptualize module functions while AlphaEvolve refines the implementation details; in mathematical research, mathematicians propose conjectures while AlphaEvolve attempts to construct proofs or counterexamples.

With such AI collaborators, humans can focus on high-level creativity and directional oversight, while AI handles the massive experimentation. This division of labor between humans and machines promises to significantly boost the speed and scope of innovation.

Acceleration and Democratization of Research and Engineering

The advent of AlphaEvolve marks the emergence of an automated innovation capability. Should this technology mature and be widely adopted, we could witness an era of accelerated research and engineering. Long-standing problems might be solved more quickly, and new algorithms will emerge in abundance, shortening the cycle of technological progress.

At the same time, this capability could lower the barriers to innovation—smaller teams or individual developers with good ideas could leverage AlphaEvolve to implement them without needing vast research resources. Of course, this demands that the associated tools are user-friendly.

The DeepMind team is already developing a user-friendly interface and plans to launch an early academic access program. It is easy to envision that as systems like AlphaEvolve mature, algorithm design—once a domain reserved for specialists—will become more accessible, allowing a broader range of people to harness AI to address challenges in their fields.

Potential Impact on Society

Looking further ahead, automated evolutionary AI like AlphaEvolve could have profound implications.

On one hand, it will significantly enrich human knowledge and technological capabilities. Previously, we relied on humans to invent algorithms one by one, but now AI might mass-produce solutions, addressing many long-tail problems and optimizations. This will boost efficiency across industries, reduce energy consumption, and create a more convenient technological environment.

On the other hand, it raises new responsibilities and ethical questions: as AI begins to create, how do we verify the reliability of its innovations? Can humans fully understand the complex solutions generated by AI? Is there risk in adopting AI-generated algorithms in critical areas? These issues require careful consideration even as we reap the benefits.

Overall, the picture painted by AlphaEvolve is positive—it shows the possibility of AI self-evolution and self-improvement, perhaps marking an important milestone in the evolution of artificial intelligence and hinting that AI will take a more proactive role in scientific innovation in the future.

How Will AlphaEvolve Impact Our Future?

AlphaEvolve is an AI capable of “evolving its programming.” It treats problems like a biological environment and algorithms as living entities, continually experimenting and selecting to “breed” better solutions. For the general reader, think of it as an indefatigable virtual research assistant: given a clear goal, it tirelessly generates a variety of proposals, automatically evaluates their merits, discards the bad, retains and improves the good, until a satisfactory answer is found.

AlphaEvolve is remarkable because it initiates a new mode of AI innovation.

In the past, AI primarily executed tasks given by humans, but now it is starting to figure out how to solve problems on its own. Essentially, AlphaEvolve represents a leap toward higher-level AI applications—not just solving problems, but discovering new solutions.

Some experts have described this achievement as “pretty astonishing,” calling it the first successful demonstration of a general-purpose large model driving new scientific discoveries. It has already proven that machines can achieve significant results in chip design and resource scheduling in engineering, as well as make headway at the forefront of mathematics by contributing incremental breakthroughs to puzzles that have persisted for centuries.

AlphaEvolve—and future automated evolutionary AIs—could profoundly impact our society.

On one hand, it has the potential to become an indispensable tool for researchers and engineers, accelerating the innovation process so humanity can more rapidly address major challenges in energy, medicine, technology, and beyond. On the other hand, the widespread adoption of such technology might reshape education and talent needs—the future may call for individuals skilled in collaborating with AI, making human-machine teams the norm.

AlphaEvolve represents a significant step forward as AI moves from “being able to do” to “being able to create.” It offers a glimpse into a future where AI acts as a collaborator or even a driver of innovation, helping to shape the technological landscape with us.

References:

- Google DeepMind Blog: “AlphaEvolve: A Gemini-powered coding agent for designing advanced algorithms”

- VentureBeat: “Meet AlphaEvolve, the Google AI that writes its own code — and just saved millions in computing costs”

- Nature: “DeepMind unveils ‘spectacular’ general-purpose science AI”

- IEEE Spectrum: “New AI Model Advances the ‘Kissing Problem’ and More”

- Open Source Community Article: “AlphaEvolve vs Codex: A Comparison of Technological Directions and Focus”

- Wikipedia: AutoGPT entry (an introduction to autonomous agents like AutoGPT)

- Wikipedia: OpenAI Codex entry (detailing Codex’s uses and features, as cited in comparison articles)

- Tech Blog: Overview of AutoML (explaining the definition and scope of automated machine learning)