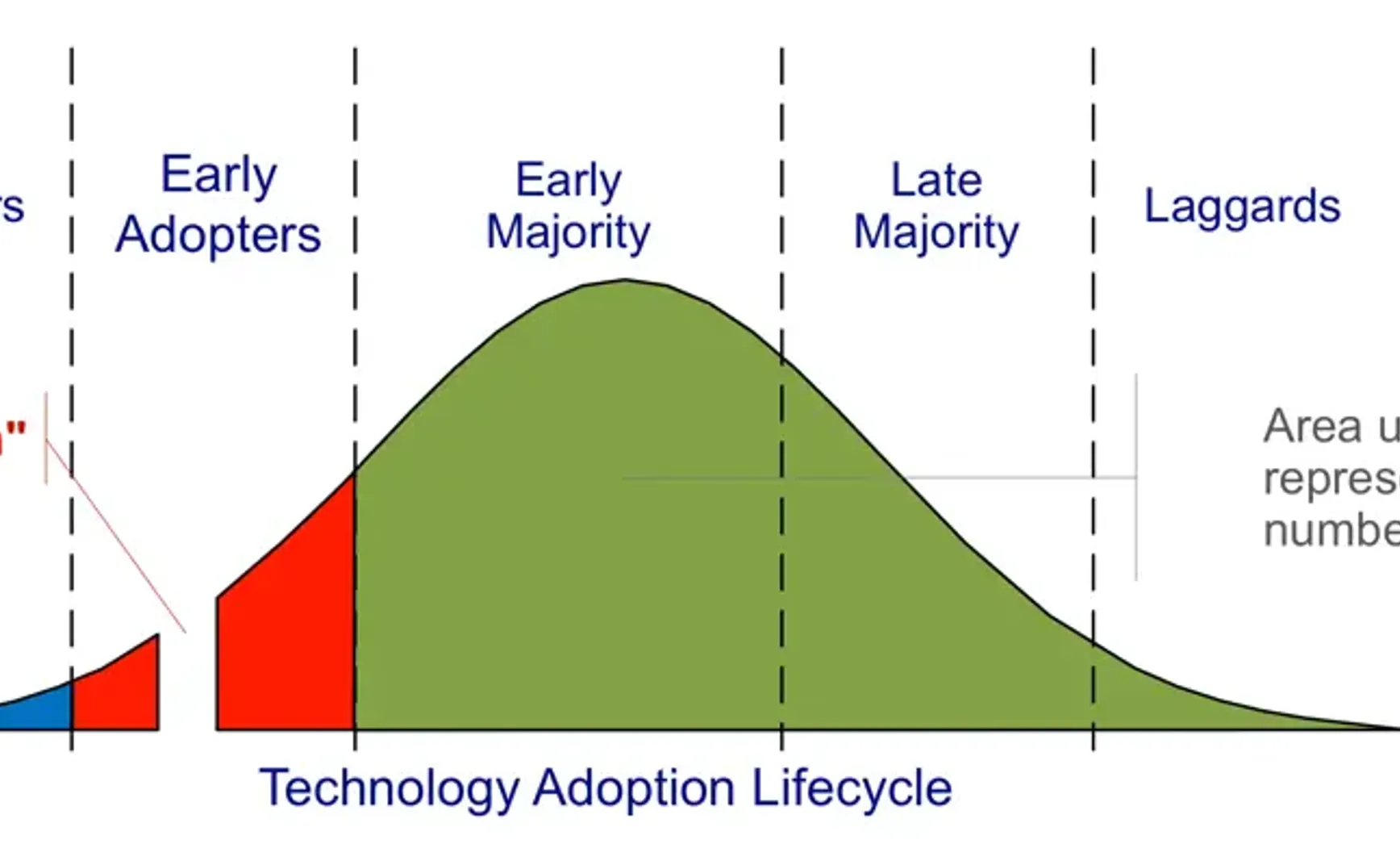

Technology Adoption Lifecycle Curve: The diagram above illustrates the Technology Adoption Lifecycle model, as popularized by Geoffrey Moore in Crossing the Chasm. It divides users into five categories: innovators, early adopters, early majority, late majority, and laggards.

The red area marks a significant “gap” between early adopters and the early majority—commonly referred to as The Chasm. Many new technologies, after attracting geeks and tech enthusiasts, struggle to cross this chasm into the mass market. This pattern is particularly evident in today’s wave of AI products.

1. Why AI Breakthroughs Haven’t Reached the Masses

In recent years, from large language models generating fluent text to image-generation models producing photorealistic results, AI has seen a series of astonishing breakthroughs. Yet, from what I observe around me, many of these advancements have not “reached” ordinary people’s daily lives and instead remain within circles of early experimenters.

Headlines announce that “ChatGPT monthly active users surpass 100 million” or that “DeepSeek is a once-in-a-generation invention for China,” but in reality few people use AI as a daily tool. Many tried it once or twice out of curiosity while news coverage was at its peak, yet it never became part of their everyday internet life.

Some reports note that as many as 30% of the UK population have never heard of ChatGPT, highlighting the gap between public awareness and industry hype.

This reflects the disconnect between technological capability and real-life relevance.

Many AI innovations focus on showcasing cutting-edge capabilities without refining scenarios that address pain points for everyday users. Early adopters—often developers and tech professionals—enjoy trying novel technologies, while the wider public cares more about whether a product can simplify their lives and solve real problems.

When AI products stay at the stage of flashy demos and abstract concepts, without becoming tangible, useful tools, most people naturally won’t buy in. Many current AI applications have yet to connect with the real needs of the mass market, so innovation can feel like its creators’ self-indulgent celebration. That is one reason for the awkward situation in which critics applaud while the market remains indifferent.

2. Why Flashiness Outweighs User Value

We often see this: as soon as a new AI model emerges, countless products announce that they have “integrated” it. Once there’s a cool new algorithm, teams rush to plug it in for attention and hype.

In doing so, they sometimes ignore what users actually need. “Using the biggest, newest model” becomes the goal—overlooking simpler, more effective ways to solve a problem.

A study on AI projects in industry found that overly tech-driven leadership is the leading cause of AI product failure. Executives often don’t know what problem AI is supposed to solve, or they apply the latest algorithms to scenarios where AI isn’t a good fit.

The result: massive effort chasing cutting-edge features that impress at first glance, only to find users unimpressed—perhaps because a few simple rules could have solved the problem even better.

This pattern may have multiple causes:

First, creator pride and excitement. Engineers and entrepreneurs often get absorbed in the beauty of technology itself, using technical showmanship to prove their capability and win applause with dazzling demos. Yet over-immersion in technical detail makes it easy to forget the product’s core mission—creating value for users.

Industry examples abound: teams building multiple large-model platforms, eager to pack in every new AI capability, churning out demos—but rarely digging into actual business scenarios to identify real value. The tech scene appears lively, but business engagement is shallow, and product definition alongside user pain points gets ignored.

As one Alibaba Cloud executive bluntly put it: many enterprises are “taking the platform around just to do demos,” filling the company with self-congratulatory fascination for new technology. It’s like building a gorgeous sports car without knowing where to drive it.

Another factor is market and capital pressure. In an AI boom, startups aiming for funding and attention often exaggerate technical highlights, wrapping products in a “disruptive innovation” narrative.

Under this narrative, product design can drift—feature lists get longer while user experience refinement is neglected; marketing claims AI can do everything, but once users try it, usability falls far short of expectations.

When technical flashiness becomes the primary selling point, short-term buzz may rise, but long-term trust and loyalty erode.

3. The Narrative Illusion: Demo Obsession and Feature Bloat

There’s another notable AI trend: the narrative illusion—an obsession with demos and piling on features.

Launch events and media coverage often showcase stunning AI demos: models being witty, robots that sing and dance—making it seem like AI can do anything. But these demos are usually run in controlled settings, free from the complexity of real-world constraints.

Once outside the scripted demo, users often find performance drops sharply. The “cool demo” builds inflated expectations, making actual usability look disappointing by comparison.

Many insiders joke: “An AI demo that looks great doesn’t necessarily make a great product.”

A fixation on demos makes teams focus on creating “wow moments” without investing enough in consistent, reliable user experience. This strips away practicality: users’ expectations are raised but everyday use disappoints—eventually driving them away.

Feature bloat is another trap. Faced with AI’s possibilities, teams want to cram everything in: chatbots, image generation, voice assistants, and more.

The feature list keeps growing, as if “more” means “better.” But blindly piling on features erodes focus and identity.

When AI capabilities can be plugged into products so easily, many teams fall into this stacking frenzy, forgetting that real leaps in value come from a shift in understanding, not from the sum of features. Systems made complex by feature overload may not appeal to users: too many modes and options raise the barrier to entry and leave everyday users lost.

The result: AI products that feel like flashy buffets, while users never experience a single breakthrough benefit.

The psychology behind demo obsession and feature stacking stems from fascination with AI’s potential, combined with the urge to “prove we’ve done a lot.”

But in product philosophy, great products rarely succeed by doing everything; they win by doing one thing to perfection. When creators fall in love with their own narrative and detach from user reality, that narrative becomes an illusion.

I’ve had similar experiences during my internships. At first I wanted to cover every detail in every task so that everyone could see my value, but I ended up with outputs whose impact was diluted. The lesson matches the “less is more” principle: keep only what serves the goal, rather than adding for the sake of flashiness.

No matter how dazzling the story, if it doesn’t translate into tangible, visible value for users, it’s nothing more than a mirage.

4. A More Human-Centered Product Path

Ultimately, technology must serve people, and the supreme test of any product is its impact on human well-being. A human-centered path means putting people at the core throughout innovation—advancing technology to augment human capabilities and experiences, not to showcase technical ego.

People first, technology for good.

Research from Accenture shows that sustainable development depends on placing human needs, dignity, and agency at the center. This is a shift from instrumental rationality back to human care: in designing AI products, the key questions aren’t “What can we do?” but “What do users truly need?” and “Will society be better as a whole because of it?”

This means emphasizing AI’s role in augmenting rather than replacing humans: instead of building AI systems that make people redundant, create assistants that help them work more efficiently and safely.

User co-creation and insight mechanisms.

Empathetic product paths emphasize deep co-creation with users—from early-stage needs definition, to mid-stage prototype testing, to late-stage iteration. Real target users should be invited to give feedback and share pain points.

This not only calibrates product direction but also respects user agency—transforming the product into a collaborative outcome rooted in real-life insight, not a unilateral creation by elite technologists.

Through co-creation, teams can truly “see the mechanisms”: uncover the deeper reasons behind user experiences, and identify the real barriers to integrating technology into daily life. This requires product makers to keep a “rational yet passionate” mindset: scientifically diagnosing problems while warmly understanding human thoughts and feelings.

Long-term value over short-term gimmicks.

A humanist approach also demands long-termism: moving deliberately and in the right direction, even if that is slower.

When others pile on features, perhaps we focus on perfecting one core function; when competitors chase attention-grabbing launches, we might quietly refine behind-the-scenes details that users genuinely feel.

Short term, this might not be as glamorous as hype—but long term, it builds user trust, reputation, and emotional connection. Once formed, this bond is more defensible than any technological barrier. Technology will always evolve, but the products that touch people’s hearts endure.

5. The Value and Meaning of the “Minimum Useful Win”

Deliver the smallest possible feature that provides a clear, tangible win for the user. —Minimum Useful Win

Unlike the traditional minimum viable product (MVP), which focuses on viability, the “win” here emphasizes usefulness to the user and the value delivered. Before pursuing grand ambitions, teams should ask: is there a small, well-defined scenario we can quickly build that truly solves a user pain point?

If yes, focus on that, and secure this smallest but precious win.

The “Minimum Useful Win” is about validating value.

When a team quickly delivers an independent, business-relevant feature into users’ hands, it’s like lighting the first lamp—proof to both users and the market that the product is on the right track, and that AI isn’t just a showpiece but solves real problems.

For example, in an AI productivity product, instead of launching ten barely usable features at once, focus on perfecting “smart summarization” so users can truly feel: “Wow, AI just summarized my meeting notes in 5 seconds—this is great!”

Such a moment builds user confidence and lays the groundwork for expanding features.

The Minimum Useful Win also helps avoid overdesign and maintain core value focus.

Overengineering is a common AI development trap: pursuing technical perfection often adds flashy but peripheral features. The Minimum Useful Win (MUW) principle reminds us to stick to the “small but essential” rule: anything that does not directly deliver user value can wait.

A summary of AI practice in enterprise contexts emphasizes identifying the core pain points AI can solve (efficiency gains, risk reduction) instead of compiling features “for AI’s sake.” This aligns with the idea of a minimum marketable feature: a self-contained, value-generating unit that can be shipped fast.

By stacking these small wins, products can cross the chasm step by step, instead of leaping for it and falling short.

From a broader product philosophy perspective, the MUW embodies a pragmatic yet ambitious mindset.

It doesn’t mean stopping at minor improvements—rather, it stresses keeping user value at the center, even if the radius is small, and drawing a complete circle around it. Every iteration where the user experiences real value strengthens their connection to the product, like securing a new base camp on the climb to the summit.

These incremental victories compound: motivating the team with positive feedback while building user loyalty through continuous benefit. At a time when many AI products are bloated yet directionless, these “small but satisfying” wins often form the bedrock of a product’s lasting success.

We’re living in an exciting yet somewhat restless era.

On one hand, the pace of technological progress dazzles. On the other, truly warm, sticky products are rare.

After the party ends, only the long-term value solidly built for users will remain to benefit the public.