When our school groups begin exploring project themes, we usually open an online whiteboard. When particularly active teammates contribute, the board quickly fills with ideas, though only a handful may ultimately be usable.

Only recently did I read a study that made me realize the problem might not lie in the team’s energy but in our misunderstanding of “creation.” Creativity isn’t about dumping ideas all at once; it’s about letting them collide like dominoes—bouncing off one another, passing the momentum, and continuously growing.

The literature calls this process “idea linking,” which I refer to as “Connecting Ideas.” It’s like placing a paper boat in a river: the first current merely pushes it gently, while every subsequent twist and confluence brings surprises. Perhaps truly efficient creation isn’t the loud, chaotic “brainstorm” but a dedicated pursuit of clues—first, letting curiosity create a gap, then allowing ideas to “interlock,” following a winding path that leads to new territories.

Connecting Ideas

The title of the article is “Driven by Specific Curiosity,” where “specific curiosity” refers to a desire to solve a particular puzzle or problem. “Connecting Ideas” means using early ideas as input for later ones: one idea becomes the stepping stone for another.

Since early ideas serve as a springboard for later ones, you shouldn’t rush to dismiss them: they are the starting point of exploration. As the figure below depicts, you take each preceding idea, modify it, actively extract its beneficial elements, and derive the next idea from them.

For example, in our engineering psychology class when we had to design a product prototype, I initially thought about “developing a job search app.” I then questioned what the pain points were in the job hunting process. Could a personality test be added? How could the test data be used to match suitable jobs? Each new question made the idea more concrete and in-depth. Eventually, I moved beyond a simple “job platform” concept and designed an intelligent career matching system based on personal characteristics.

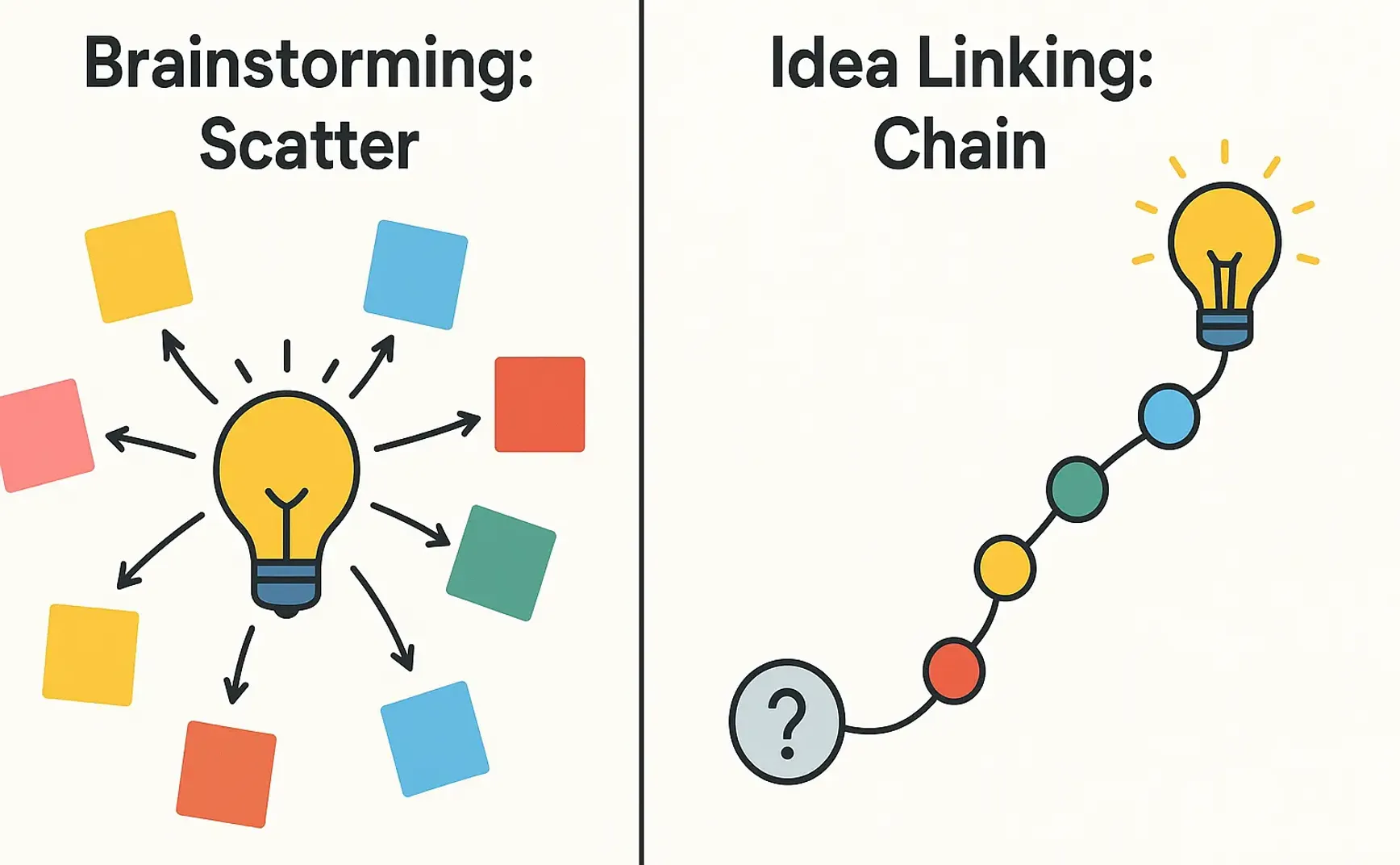

To compare “brainstorming” and “Connecting Ideas”: In a group discussion, brainstorming involves everyone thinking and voicing their ideas simultaneously. After a while, you end up with a whiteboard full of colorful, independent ideas. In contrast, “Connecting Ideas” starts with an initial point and continuously evolves it. Each step is an extension and modification of the previous one.

In form, brainstorming aims to generate as many ideas as possible in one burst, while “Connecting Ideas” forms a chain of nodes, preserving traces of every previous step. The former starts with a theme and diffuses outward indiscriminately, whereas the latter begins with a clear but unsolved puzzle—a specific gap—around which ideas spiral upward. As a result, brainstorming leaves you with a basket of isolated nodes, while “Connecting Ideas” provides an “evolutionary genealogy” that clearly shows the transformation and selection process.

Process-wise, brainstorming is an explosive, simultaneous process, fueled by an uninhibited call to share whatever comes to mind. “Connecting Ideas,” on the other hand, builds gradually, requiring your next thought to incorporate an element of the previous one—this is a fundamental difference in their methods of inspiration.

Typically, when we generate ideas through brainstorming, the best ideas are selected after the session is over. In contrast, with Connecting Ideas, every step undergoes optimization and iterative validation, naturally embodying a cycle of “demonstration–trial and error–iteration.”

In terms of form, I’m reminded of depth-first versus breadth-first algorithms. Brainstorming is like a breadth-first search: starting with a root idea, it spreads layer by layer, exploring all options at the same depth. “Connecting Ideas” leans toward depth-first; it picks the most promising branch and follows it down to its conclusion, with each step inheriting information from the previous one. This results in a causal chain that is easy to trace and reuse.
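To make the search analogy concrete, here is a minimal Python sketch that walks the same small idea tree in both orders. The tree loosely follows my job-search example; the “resume builder” branch and all the function names are assumptions added purely for illustration. Plain depth-first search also just takes the first child rather than the “most promising” one, so treat this as a simplified picture of the analogy.

```python
from collections import deque

# Hypothetical idea tree: each idea maps to the ideas derived from it.
IDEA_TREE = {
    "job search app": ["pain points in job hunting", "resume builder"],
    "pain points in job hunting": ["add a personality test"],
    "resume builder": ["resume templates"],
    "add a personality test": ["match test data to jobs"],
    "resume templates": [],
    "match test data to jobs": [],
}


def breadth_first(tree, root):
    """Brainstorm-like order: visit every idea at one depth before going deeper."""
    order, queue = [], deque([root])
    while queue:
        idea = queue.popleft()
        order.append(idea)
        queue.extend(tree[idea])
    return order


def depth_first(tree, root):
    """Chain-like order: follow one branch to its end before backtracking."""
    order, stack = [], [root]
    while stack:
        idea = stack.pop()
        order.append(idea)
        # Reverse so that the first-listed child is explored first.
        stack.extend(reversed(tree[idea]))
    return order


bfs_order = breadth_first(IDEA_TREE, "job search app")
dfs_order = depth_first(IDEA_TREE, "job search app")
```

In the depth-first order, the first four entries form one unbroken chain from “job search app” to “match test data to jobs,” which is the traceable causal chain I have in mind. The breadth-first order jumps between the two branches at every level.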

How “Connecting Ideas” Enhances Creativity

I prefer to view “Connecting Ideas” as an adjustment to one’s creative rhythm. It transforms the process from a one-time explosive output into a pattern of “build up, release, and build up” like a measured breath.

As the title suggests, specific curiosity has a certain stickiness. When you hold a concrete, unsolved gap in your mind, your brain’s reward system continuously secretes dopamine, urging you to complete the missing puzzle piece.

So, is specific curiosity better than general curiosity?

General curiosity broadens your horizons, while specific curiosity forces you to notice the nuanced details. The former is like inhaling fresh oxygen; the latter is like delivering that oxygen into your capillaries. General curiosity creates information gaps that are wide and shallow: you are aware of so many unknowns that it is hard to focus on any one of them. In contrast, specific curiosity creates a narrow, precise gap, such as “How did this playing card disappear?” or “Why does the conversion rate drop 15% exactly at step 2?”, which makes the pull and tension much greater. Loewenstein’s information gap theory suggests that only when the unknown is confined by clear boundaries does the brain form a sustained motivational loop (a dopamine cascade).

Amabile and Getzels’s early work shows that moderate constraints compel people to seek unconventional paths. General curiosity, with its lack of constraints, encourages jumping from topic to topic, making it hard to accumulate comparable elements within the same semantic space.

Specific curiosity, on the other hand, provides a kind of “shackle”—you must revolve around the puzzle. Early ideas naturally share core elements, and these common elements become the key nodes necessary for idea linking. The chain advances precisely because elements from the previous node must be used or transformed in the next.

While writing this article, I aimed to emphasize the power of the latter. In an age of information overload and cloud-based AI tools, standing out often isn’t about knowing more but about holding onto a question more tightly and for longer.

Of course, the most authoritative evidence comes from experiments—specifically Experiment 4 in the study. Researchers manipulated and measured two types of curiosity in contexts like magic puzzles, diary tracking, and chain training. Although scores on the general curiosity scale were positively correlated with information-seeking behavior, they did not significantly boost creative evaluations (both by expert review and non-routine scoring). This is because, as outlined above, general curiosity primarily increases external “material stock,” without an intrinsic mechanism that compresses this material into an evolvable sequence. Specific curiosity, however, simultaneously drives material input while maintaining a consistent semantic thread—thus forming a “qualitative change” in a short time.

“Connecting Ideas” can also reduce noise in working memory. In a brainstorming session, it’s like having dozens of browser tabs open—teammates’ ideas flood in, and you worry that your own ideas will get drowned out, forcing you to try to keep all their ideas in your working memory temporarily.

That temporary space in the brain is limited—according to Baddeley’s model, only a few “slots” are available—so it quickly gets overloaded with patchwork information. Psychologists call this “interference load”: each new input competes with existing content for encoding channels, raising noise levels, and the crucial clues that need deep processing might be missed.

“Connecting Ideas” quiets this crowded space through a simple two-step “throttling–noise filtering” mechanism.

The first step is throttling: the rule that your next thought must incorporate a core element of the previous one drastically reduces the number of candidates entering working memory. Instead of juggling a dozen independent ideas, you maintain only one active chain, while previous nodes can be externalized—written down, pinned on a whiteboard, or stored in notes—transferring them to long-term or external memory and freeing up those limited slots.

The second step is noise filtering: because the successive chain makes all new information share a “bloodline” and high semantic overlap, working memory holds a set of closely related fragments rather than disjointed pieces. Cognitive psychology shows that homogeneous information is more easily chunked and operated on by the central executive system.

In essence, the brain can treat an entire chain segment as a single composite unit, significantly reducing the cost of executive oversight and updating. With the cognitive load reduced, the brain has spare capacity for distant associations, hypothesis testing, and error monitoring—these are the higher-order functions essential to creative breakthroughs.

Brainstorming treats “deviation” as divergence, whereas this approach turns each deviation into a progressive step. In traditional creative processes, we are often hampered by the goal of finding an entirely different idea: too far a jump may miss the mark, while too slight a one feels mundane. Connecting Ideas uses a “retain and transform” micro-step that keeps every deviation traceable. It is akin to incremental weight updates in neural networks: rather than overhauling the whole model, you nudge the parameters a little at each step in the direction that lowers the loss, until a new valley of potential emerges.
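For readers who have not seen a weight update, the following is a minimal sketch of the idea on a single parameter, using plain gradient descent. The toy loss, the learning rate, and the function names are all assumptions chosen for illustration; this is not a real neural network.

```python
def loss(w):
    # Toy loss whose minimum (the "valley") sits at w = 3.0; illustrative only.
    return (w - 3.0) ** 2


def gradient(w):
    # Derivative of the toy loss with respect to w.
    return 2.0 * (w - 3.0)


def incremental_updates(w0, learning_rate=0.1, steps=50):
    """Return the full trajectory so that every small deviation stays traceable."""
    trajectory = [w0]
    w = w0
    for _ in range(steps):
        # Keep most of the current value and change it only slightly.
        w = w - learning_rate * gradient(w)
        trajectory.append(w)
    return trajectory


path = incremental_updates(w0=0.0)
```

Each entry in `path` is the previous value plus a small correction, much as each idea in a chain is the previous idea with one element transformed. Keeping the whole list is the counterpart of keeping the chain’s history.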

Thus, it inherently provides a reviewable track record that not only facilitates reuse by others but also allows the creator to return to earlier nodes and graft parallel branches. Connecting Ideas is not just a fancy process—it weaves together motivational calibration, cognitive rhythm, memory management, and evolutionary logic into a single cord that, though slender, can propel thinking further and deeper.

Project Practice

Recently, in an engineering psychology group project, we designed a prototype for a car HUD (head-up display) that “interacts with people safely without distraction.” I decided to drive the entire concept phase with the Connecting Ideas method.

The class assignment was broad—any “driving-human factors” topic was acceptable. Based on the methodology, I first narrowed my curiosity by deliberately creating a small gap. I searched online for phenomena encountered during driving and selected one: why do drivers often miss the “left turn” instruction on voice navigation while driving at high speeds?

This question encompassed safety risks, cognitive overload, and attentional mismatches—factors discussed in our course—so I used it as the entry point.

My first instinct was to synchronize the voice prompt with a pop-up on the screen that highlights a left-turn arrow. Building on that, I wanted to preserve the concept of “simultaneous presentation” but also wondered if visual elements might cause drivers to look down, thus necessitating a “vision-friendly, non-distracting visualization.”

Then I thought of projecting the arrow onto the windshield via the HUD. This, however, raised a new issue: what happens in very bright conditions, or at night when the weather makes surfaces reflective? A bright HUD might dazzle the driver. Here, I chose to retain the HUD’s head-up advantage and address the glare in subsequent refinements.

For the brightness issue, we considered making the display brightness-adaptive by integrating a light sensor for dynamic adjustment. This, however, introduced another problem: the sensor responds with a delay, so the display might lag during extreme lighting transitions, especially at high driving speeds. Moreover, brightness adjustment alone might not be enough to distinguish a left turn from going straight.

So, while maintaining the head-up and adaptive features, we modified the arrow into a simple flow bar: going straight is represented as a fading blue horizontal bar, left turns display a curved bar with green flashing dots, and right turns are a mirrored version, all against a low-contrast background. This triple-code—via color, shape, and position—greatly reduces the time needed for instant recognition.

In summary, the process was as follows: start with an initial idea, extract a useful element from it, then iterate by improving areas that need enhancement—step by step, the idea evolves.

The key tips are:

- Lock in the puzzle within one minute of exploration: the smaller, the better—ideally stated as “Why does X lead to Y?”

- For each step, note “what have I retained?”—don’t simply negate or replace, but transform the previous node using a “+ / –” approach.

- Externalize old nodes: whether you use sticky notes, Miro, or Git version control, make sure that working memory holds only the head of the current chain.

- Keep the chain length ≤5: if after five steps you still can’t find a resolution, branch out in the middle to start parallel tracks; maintain a depth-first approach.
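The tips above can be sketched as a small data structure. This is only one possible way to encode them, not something taken from the study: the class names, the field names, and the choice to raise errors are my own assumptions. The example chain reuses the HUD steps described earlier.

```python
from dataclasses import dataclass, field
from typing import List, Optional

MAX_CHAIN_LENGTH = 5  # the "chain length <= 5" tip


@dataclass
class Node:
    idea: str
    retained: str  # "+": what this step keeps from the previous node
    changed: str   # "-": what this step alters or drops


@dataclass
class IdeaChain:
    puzzle: str                                        # the narrow gap being chased
    archive: List[Node] = field(default_factory=list)  # externalized older nodes
    head: Optional[Node] = None                        # the only node held "in mind"

    def size(self) -> int:
        return len(self.archive) + (1 if self.head is not None else 0)

    def extend(self, idea: str, retained: str, changed: str) -> Node:
        if self.head is not None and not retained.strip():
            raise ValueError("each step must retain something from the previous node")
        if self.size() >= MAX_CHAIN_LENGTH:
            raise OverflowError("chain is full: branch from an earlier node instead")
        if self.head is not None:
            self.archive.append(self.head)  # externalize the old head
        self.head = Node(idea, retained, changed)
        return self.head

    def branch_from(self, index: int) -> "IdeaChain":
        """Start a parallel chain that reuses nodes 0..index of this one."""
        nodes = (self.archive + [self.head])[: index + 1]
        return IdeaChain(self.puzzle, archive=nodes[:-1], head=nodes[-1])


chain = IdeaChain("Why do drivers miss the spoken left-turn instruction at high speed?")
chain.extend("voice prompt plus on-screen left-turn arrow", retained="", changed="")
chain.extend("project the arrow onto the windshield via HUD",
             retained="simultaneous presentation", changed="no need to look down")
chain.extend("light sensor adapts HUD brightness",
             retained="head-up position", changed="brightness is now dynamic")
chain.extend("flow bar coded by color, shape, and position",
             retained="head-up and adaptive", changed="arrow becomes a flow bar")
```

Only `chain.head` represents what you keep in mind; everything in `chain.archive` is written down elsewhere. When the length limit is hit, `branch_from` starts a parallel track from a middle node instead of stretching the same chain further.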

Reflecting on AI Collaboration

In the AI era, our workflows inevitably incorporate some AI collaboration. Thus, the final section focuses on the “human-AI collaboration paradigm.”

In the context of Connecting Ideas, the most intuitive role for an AI model is as a recorder of the chain’s evolution. From the very first thought onward, it encodes each inference and rejection into a temporal sequence. You can discuss the process with the AI, then have it summarize afterward. Alternatively, you might use a speech-to-text app to convert your dialogue into text for the AI to summarize. You can ask it for suggestions and interact further.

This approach might view AI as a “librarian,” yet it also has the potential to be a “cognitive tension amplifier.”

Each evolutionary step is linked to the previous one, so discussing with the AI can help you stay on course and avoid drifting too far from your original motivation. Conversely, as the chain reaches its fifth or sixth node and momentum wanes, the AI can introduce a slight disruption—simulating counterexamples or setting extreme scenarios—like tossing a small stone into calm water, creating ripples that subtly bend the original path, reopening the gap and triggering another round of dopamine release.

I recall during the HUD prototype project we reached the sixth node in our idea chain. While the flow bar’s multi-level encoding seemed complete, the team suddenly lost enthusiasm: although test data was impressive, no one pressed on with the next step.

So, I passed the conversation to GPT, asking it to recount the chain. It acted as a calm narrator, stringing together the retained factors, transformed factors, and remaining gaps from each step into a timeline. In the end, it posed a question: “The current design assumes that the driver always sits in an ideal position; what happens if the driver slightly turns their head to check the rearview mirror—can the HUD remain readable?”

Thus, we pivoted to consider the “head-turning perspective” as an extreme scenario. At that moment, the chain tightened—the seventh node naturally became “overlay a tracking micro-arrow shadow on the flow bar, lit only when the driver’s view shifts.”

There’s also a more experimental form of collaboration I call “stream relay.” I liken this human-AI co-creation rhythm to a river: upstream, the water is turbulent as the first leaf drifts by; midstream, dark eddies, curved banks, and boulders force the leaf to change course; and downstream, tributaries converge to form a wide, fertile delta.

The essence of Connecting Ideas is facilitating creativity along a “narrowing yet repeatedly torn information gap,” always maintaining one active node while externalizing and archiving other nodes to preserve focus and tension. Ensuring the chain continues while being suitably perturbed requires a sufficiently “two-dimensional” stage—one where the main line and its branches coexist visually—and a partner who can appropriately toss in that “contrarian pebble” into the calm waters.

Flowith naturally possesses these qualities: its canvas view breaks down dialogues into nodes and connections, bending the timeline into a spatial network.

In most linear chat interfaces, ideas that scroll out of view sink to the bottom of the “river.” Unless you search by keyword or use a large language model with retrieval-augmented generation (RAG), they are hard to recapture. Flowith’s node-based design pins each evolutionary step on the canvas like buoys marking the main stream, tributaries, and backflows.

Flowith turns every output from a person or model into an independent node, inherently offering a traceable “evolutionary genealogy.” We can always trace back to ancestor nodes or graft parallel branches without having to maintain the version tree in our heads.
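To show what “tracing back to ancestor nodes” and “grafting parallel branches” mean structurally, here is a minimal sketch of a node tree. It is not Flowith’s actual data model or API, which I have not seen; the `CanvasNode` class and its methods are hypothetical, and the node texts borrow from the HUD project, apart from the `alternative` node, which is a made-up placeholder.

```python
class CanvasNode:
    """One output on the canvas, written by a person or a model."""

    def __init__(self, text, author, parent=None):
        self.text = text
        self.author = author  # "human" or "model"
        self.parent = parent
        self.children = []

    def reply(self, text, author):
        """Attach a new node under this one and return it."""
        child = CanvasNode(text, author, parent=self)
        self.children.append(child)
        return child

    def lineage(self):
        """Walk up to the root and return the texts from oldest to newest."""
        path, node = [], self
        while node is not None:
            path.append(node.text)
            node = node.parent
        return path[::-1]


flow_bar = CanvasNode("flow bar coded by color, shape, and position", "human")
question = flow_bar.reply("Is the HUD readable when the driver turns their head?", "model")
micro_arrow = question.reply("tracking micro-arrow shown when the view shifts", "human")
# Grafting a parallel branch: a second answer to the same question is kept
# beside the first one instead of overwriting it.
alternative = question.reply("a second attempt kept beside the first", "human")
```

Calling `micro_arrow.lineage()` returns the full path from the flow bar to the micro-arrow, so the version tree lives in the structure rather than in anyone’s head.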

Moreover, when interacting with traditional large language model software (like ChatGPT), if you phrase a question badly or receive an unsatisfactory answer, you have to rephrase and ask again. Personally, I worry that if I simply ask a follow-up without editing the original message, the earlier erroneous context will remain and adversely affect the output. So I end up rewriting the question, effectively overwriting the earlier, flawed line of thought.

Yet sometimes our ideas aren’t absolutely right or wrong. With AI, comparing answers is necessary. Using a node-based board like Flowith for communication allows us not only to retain multiple lines of inquiry and responses for comparison but also to iterate towards the optimal solution.

This approach preserves not only the process of idea evolution but also the record of failed ideas, which can later be used for review or summaries.

Conclusion

At the heart of all these collaborative methods is rhythm and space for reflection.

In an era of information overload and ubiquitous tools, our biggest mistake is treating models as mere productivity multipliers—demanding tens of thousands of words at once—and forgetting that genuine creativity requires a measured, breath-by-breath process.

Creativity isn’t achieved by shooting in all directions—it comes from continuous, problem-driven probing.

Of course, human-AI collaboration doesn’t mean offloading all problems to AI. If we haven’t formed a clear, specific puzzle ourselves, large language models will only provide conventional answers. Only by engaging in a sequence of linked inquiries and feeding back into the AI can we approach the idea linking described in the research.

Asking questions touches on prompt engineering, but that doesn’t mean writing an excessively long prompt. Rather than one lengthy prompt, it’s better to have a series of iterative prompts—each building on the highlights of the previous round and challenging an unresolved sub-problem.

In the past, a piece of trivia or a fleeting idea might not have gone anywhere; now that AI can rapidly prototype, write scripts, and search the literature, the cost of validation has dropped drastically. Organizations can set up an OKR submetric like “One Why per Day” and use an AI dashboard to archive the day’s questions, prototypes, and subsequent outcomes, forming an internal knowledge base.

The scarcity in creativity has shifted from “not enough information” to “insufficient focus and linked ideas.” Transforming “curiosity–stepping stone–more curiosity” into a visible, traceable, and trainable process is key to upgrading our work paradigms.

AI is an amplifier, but only if humans first ignite that spark of “wanting to know what’s really going on” and learn to connect it into a string of light.