Artificial intelligence is fueling an explosion of specialized professional agents across nearly every industry. Each agent is custom-designed to handle specific repetitive or complex tasks.

From streamlining healthcare operations to optimizing retail pricing and automating recruitment processes, these agents promise to significantly increase efficiency and capability. Agents developed by various companies, built on different frameworks and focused on distinct business areas, have so far operated independently.

However, in real life, challenges rarely fall neatly within a single application or domain. Imagine how much better it would be if these agents could communicate with one another and collaborate on our behalf.

Google’s newly released Agent2Agent (A2A) protocol is designed to make that vision a reality, ushering in a new era of “agent interoperability.”

This open protocol enables different AI agents to communicate, share information, and coordinate actions across various applications and organizations—even when developed by different vendors. In doing so, AI systems can work seamlessly together to resolve complex tasks.

What Is the A2A Protocol?

Agent2Agent (A2A) is a standardized method for one agent to converse with another—exchanging tasks, responses, and data—regardless of who developed the agent or on which framework it runs.

Just as Internet protocols (like HTTP) enable any web browser and server to interact, A2A provides a universal language for AI agents, allowing them to collaborate irrespective of their underlying frameworks or vendors. Its goal is to break down the “siloed” nature of AI agents and unlock the potential of multi-agent collaboration.

Today, companies may deploy several specialized agents (for IT support, scheduling, analytics, customer service, etc.) that often come from different vendors. Without a standard, these agents cannot work together directly, limiting their overall effectiveness.

A2A is designed to let agents interoperate across different systems and assemble temporary teams to handle larger tasks. If Company A’s agent and Company B’s agent both implement A2A, Company A’s agent can safely request assistance from Company B’s agent. By enabling this kind of cross-vendor dialogue, companies can greatly boost the productivity they get from AI while avoiding vendor lock-in.

Google believes that by coordinating agents across the entire enterprise stack, A2A will “boost autonomy, multiply productivity, and lower long-term costs.”

Some key features and principles of A2A include:

- Embracing the “agent” capability: Rather than viewing AI services merely as APIs or tools, A2A treats each participant as a true agent. This means every agent uses its own reasoning and context to process requests, rather than functioning as a simple endpoint. Google emphasizes that it has enabled “true multi-agent scenarios without reducing agents to mere ‘tools’.” In other words, a remote agent isn’t just a function call; it is an autonomous AI with which you collaborate.

- Openness and interoperability: A2A is publicly released and vendor-neutral. It builds on familiar Internet standards: HTTP for transport, JSON as the data format, and JSON-RPC (remote procedure call) for structured interactions. Because it relies on standard web protocols, it is easier to integrate into existing IT infrastructure. Any developer or company can implement A2A in their agent systems, building a broad ecosystem.

- Security by default: Targeted at enterprise environments, A2A includes robust security and authentication measures. It supports enterprise-grade authentication mechanisms comparable to the authentication schemes in the OpenAPI specification. This ensures that when one agent calls another, it can verify identity and permissions. Sensitive data remains protected, and enterprises can trust that only authorized agents collaborate.

- Flexible task handling (short-term or long-term): Agents using A2A can cooperate on tasks that complete immediately or require extended processing. The protocol is designed to gracefully handle long-running tasks, even those lasting hours or days and possibly involving humans. During an extended process (such as a multi-day data analysis), agents can exchange real-time updates, notifications, and status changes with each other and with users. Compared to a simple request–response API, this is a significant upgrade that enables sustained collaboration.

- Multi-modal communication: A2A is not limited to plain text; agents can exchange images, audio, video, or other rich media if needed. For example, one agent might generate a chart or video and send it via A2A to another agent or user interface. The protocol also supports streaming data (using Server-Sent Events, SSE) for real-time audio/video or token-by-token text streams. This makes agent interactions more dynamic and “human-like” in the way they share information.
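To make the streaming point concrete, here is a minimal sketch of how a client might read status updates delivered as Server-Sent Events. It assumes each event's `data:` field carries a JSON object; the payload fields `state` and `text` are hypothetical and are not taken from the A2A specification.

```python
import json
from typing import Iterable, Iterator, List


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one decoded JSON payload per Server-Sent Event.

    In the SSE format, an event's `data:` lines are joined with newlines
    and the event is dispatched when a blank line is reached.
    """
    data_lines: List[str] = []
    for raw in lines:
        line = raw.rstrip("\n")
        if line == "":  # a blank line ends the current event
            if data_lines:
                yield json.loads("\n".join(data_lines))
                data_lines = []
        elif line.startswith("data:"):
            value = line[len("data:"):]
            # SSE strips a single leading space after the colon
            data_lines.append(value[1:] if value.startswith(" ") else value)
        # other fields (event:, id:, retry:) and comments are ignored here


# Hypothetical stream; the payload field names are assumptions.
stream = [
    'data: {"state": "working", "text": "20% complete"}\n',
    "\n",
    'data: {"state": "completed", "text": "done"}\n',
    "\n",
]
updates = list(parse_sse(stream))
print([u["state"] for u in updates])
```

In a real client the lines would come from an open HTTP response rather than a list, but the parsing logic would look much the same.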

At its core, the A2A protocol provides a universal “language” for AI agents to hold conversations, coordinate, and collaborate. Google states, “The A2A protocol will let AI agents communicate with each other, securely exchange information, and coordinate actions across a variety of enterprise platforms or applications.”

By open-sourcing A2A and rallying over 50 industry partners from day one—including Atlassian, Box, Salesforce, SAP, and many others—Google is pushing for it to become a universal standard.

In their vision, future AI agents—regardless of how they are built—will be able to “seamlessly collaborate to solve complex challenges.”

How Does A2A Work?

At a high level, A2A defines a structured dialogue between a client agent and a remote agent.

The client agent is the one initiating the request—typically an agent that directly serves users or coordinates workflows. The remote agent is the assistant or expert that the client agent calls upon to execute subtasks or provide information.

Capability Discovery: Agents advertise their capabilities using “agent cards” (metadata in JSON format). Before collaborating, the client agent can review another agent’s card to understand its abilities and decide if the agent is suitable for a specific task. For instance, an agent’s card might read “I can retrieve corporate sales data” or “I can generate marketing copy.” This allows an agent to dynamically find the best partner for a given task.
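As a rough illustration of capability discovery, the sketch below filters a set of agent cards by the skills they advertise. The card fields (`name`, `url`, `skills`, `tags`) and the `example.com` URLs are assumptions made for this example, not the normative schema.

```python
from typing import List, Set

# Hypothetical agent cards; field names are illustrative assumptions.
AGENT_CARDS = [
    {
        "name": "recruiting-agent",
        "url": "https://recruiting.example.com/a2a",
        "skills": [
            {"id": "candidate-search", "tags": ["recruiting", "resume", "search"]}
        ],
    },
    {
        "name": "copy-agent",
        "url": "https://copy.example.com/a2a",
        "skills": [{"id": "marketing-copy", "tags": ["marketing", "writing"]}],
    },
]


def find_agents(cards: List[dict], required_tags: Set[str]) -> List[dict]:
    """Return cards that have at least one skill covering all required tags."""
    matches = []
    for card in cards:
        for skill in card.get("skills", []):
            if required_tags <= set(skill.get("tags", [])):
                matches.append(card)
                break  # one matching skill is enough
    return matches


chosen = find_agents(AGENT_CARDS, {"recruiting", "search"})
print([card["name"] for card in chosen])
```

A production client would likely fetch cards over HTTP and rank candidates rather than take every match, but the basic idea is a lookup over advertised metadata.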

Task Request: The client agent formulates a task—a description of what needs to be done—and sends it to the remote agent using the A2A protocol. A task is a standard data structure (object) defined by A2A. It can include details such as the objective, input data, and parameters. For example, “Search for candidates for a software engineer position in New York with Python experience” could be a task.
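Because A2A uses JSON-RPC over HTTP, a task request can be pictured as a JSON-RPC 2.0 envelope around the task description. In the sketch below, only the envelope keys (`jsonrpc`, `id`, `method`, `params`) come from JSON-RPC 2.0; the method name `tasks/send` and the layout under `params` are assumptions for illustration and should be checked against the published specification.

```python
import json
import uuid


def build_task_request(text: str, method: str = "tasks/send") -> dict:
    """Wrap a natural-language task in a JSON-RPC 2.0 request envelope.

    The method name and everything under `params` are assumptions made
    for this sketch, not confirmed A2A field names.
    """
    return {
        "jsonrpc": "2.0",
        "id": str(uuid.uuid4()),  # correlates the response with this request
        "method": method,
        "params": {
            "taskId": str(uuid.uuid4()),  # hypothetical task identifier
            "message": {
                "role": "user",
                "parts": [{"type": "text", "text": text}],
            },
        },
    }


request = build_task_request(
    "Search for candidates for a software engineer position in New York "
    "with Python experience"
)
body = json.dumps(request)  # this string would be the HTTP POST body
print(body)
```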

Agent Collaboration and Messaging: Once the task is shared, the two agents engage in a back-and-forth dialogue (if needed) to complete the task. They exchange messages containing context, intermediate results, clarifying questions, or any other information relevant to the task. A2A does not enforce a rigid format for the conversation—agents can communicate naturally and flexibly (potentially using natural language instructions supplemented with structured data when necessary). This might involve the remote agent asking for additional details or the client agent providing context needed by the remote agent.

User Experience Negotiation: Interestingly, as agents exchange information, they also negotiate the output format and how it will appear to users. Each message in A2A can include one or more “parts,” each carrying a specific content type (text, image, video, form, etc.). This allows agents to explicitly discuss how results should be presented. For instance, if a remote agent has the ability to return a chart or interactive map, the client agent can indicate whether the user interface supports it (perhaps a static image is preferable on a device that cannot display interactivity). This user experience negotiation ensures that task results are delivered in the format best suited to the end user's environment.
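One simple way to picture this negotiation: the remote agent offers the same result as several parts, and the client picks the one its interface can best display. The sketch below assumes each part carries a `content_type` string; the part layout and the interactive-map type are hypothetical.

```python
from typing import List, Optional


def choose_part(offered: List[dict], supported: List[str]) -> Optional[dict]:
    """Pick the offered part the client prefers most among those it supports.

    `supported` lists the client's content types in order of preference.
    """
    by_type = {part["content_type"]: part for part in offered}
    for content_type in supported:
        if content_type in by_type:
            return by_type[content_type]
    return None  # nothing the client can render


# Hypothetical parts offered by a remote agent for the same result.
offered_parts = [
    {"content_type": "application/x-interactive-map", "data": "<map widget>"},
    {"content_type": "image/png", "data": "<static image bytes>"},
    {"content_type": "text/plain", "data": "Sales were highest in the Northeast."},
]

# A device that cannot display interactive content.
selected = choose_part(offered_parts, ["image/png", "text/plain"])
print(selected["content_type"])
```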

Task Lifecycle and Completion: A task has a lifecycle—it can be completed in a single step or remain “in progress” until the remote agent finishes the work. For quick tasks, the remote agent might directly send back the final result. For longer tasks, the remote agent might issue progress updates (e.g., “20% complete…”), and the client agent can relay these updates to the user in real time. Once complete, the remote agent returns the final artifact—the output of the task. The artifact could be data, a document, an image, or anything the task generated. The client agent then uses this artifact to fulfill the user's request (for example, displaying a list of candidate profiles).
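The lifecycle described here behaves like a small state machine. The following sketch models it under assumed state names and transition rules; neither is taken verbatim from the specification.

```python
from enum import Enum


class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"


# Allowed transitions (an assumption for this sketch).
TRANSITIONS = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED},
    TaskState.WORKING: {
        TaskState.WORKING,  # repeated progress updates
        TaskState.INPUT_REQUIRED,
        TaskState.COMPLETED,
        TaskState.FAILED,
    },
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.FAILED},
    TaskState.COMPLETED: set(),  # terminal
    TaskState.FAILED: set(),  # terminal
}


class Task:
    def __init__(self) -> None:
        self.state = TaskState.SUBMITTED
        self.history = [TaskState.SUBMITTED]
        self.artifact = None

    def advance(self, new_state: TaskState, artifact=None) -> None:
        """Move to `new_state`, rejecting transitions the table forbids."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} not allowed")
        self.state = new_state
        self.history.append(new_state)
        if new_state is TaskState.COMPLETED:
            self.artifact = artifact  # the final output of the task


task = Task()
task.advance(TaskState.WORKING)  # e.g. "20% complete"
task.advance(TaskState.WORKING)  # e.g. "60% complete"
task.advance(TaskState.COMPLETED, artifact=["candidate A", "candidate B"])
print(task.state.value, task.artifact)
```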

Secure Collaboration: Throughout the exchange, A2A handles the underlying security—ensuring each request is authenticated and authorized. Agents use tokens or credentials so that a remote agent accepts tasks only from trusted client agents, similar to API keys or OAuth tokens. All communication occurs over secure channels (HTTPS) and, being open, the protocol is subject to audit. Essentially, A2A is designed to let enterprises trust agents to work together without exposing sensitive data to the wrong parties.
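As a simplified picture of the token check, the sketch below has a remote agent accept a request only when it carries a known client's bearer token. The `X-Client-Id` header, the in-memory registry, and the token value are all hypothetical; a real deployment would more likely rely on OAuth or a similar scheme.

```python
import hmac

# Hypothetical registry of trusted client agents and their shared secrets.
TRUSTED_CLIENTS = {"hr-agent": "s3cr3t-token-for-hr"}


def is_authorized(headers: dict) -> bool:
    """Accept a request only if it carries a known client's bearer token."""
    client_id = headers.get("X-Client-Id", "")  # hypothetical header name
    auth = headers.get("Authorization", "")
    expected = TRUSTED_CLIENTS.get(client_id)
    if expected is None or not auth.startswith("Bearer "):
        return False
    presented = auth[len("Bearer "):]
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(presented.encode(), expected.encode())


good = {"X-Client-Id": "hr-agent", "Authorization": "Bearer s3cr3t-token-for-hr"}
bad = {"X-Client-Id": "hr-agent", "Authorization": "Bearer wrong"}
print(is_authorized(good), is_authorized(bad))
```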

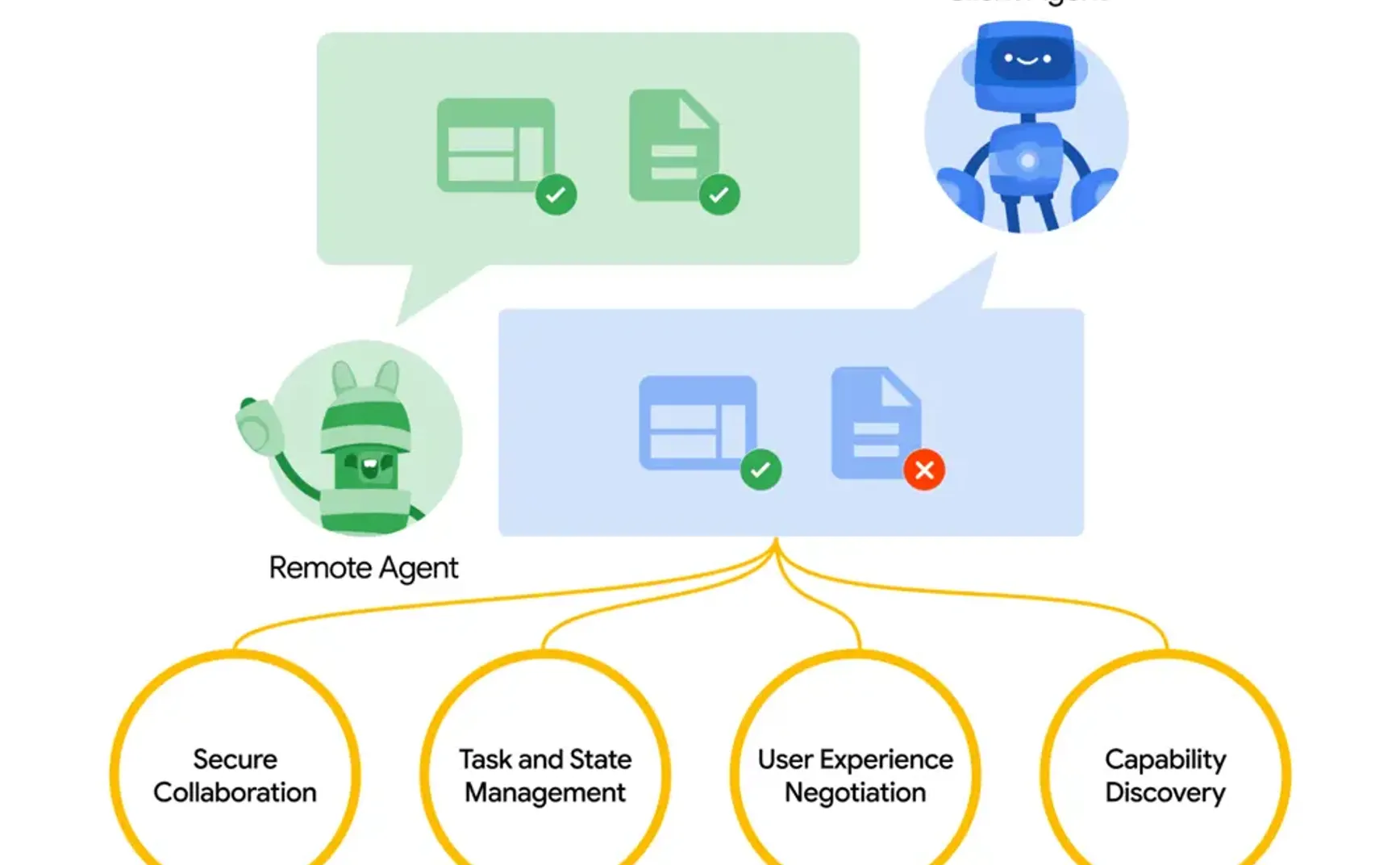

The image above shows how two AI agents communicate via the A2A protocol. The client agent (blue) delegates a task to the remote agent (green).

They exchange information (speech bubbles) to collaborate on the task, with the remote agent returning an artifact and status updates (in the image, the green check marks indicate completed items, and the red X indicates missing items).

The yellow circle highlights key components of A2A: secure collaboration, task and status management (tracking the task lifecycle and status), user experience negotiation (agreeing on result format/UI), and capability discovery (advertising and discovering what agents can do).

Essentially, A2A turns agents into team members. A client agent can discover capable peer agents, delegate subtasks to them, and then coordinate until the task is complete, all while keeping the user in the loop. The best part is that neither agent needs to share all of its internal data or memory—they simply communicate via the A2A interface, preserving privacy and modularity.

It’s much like one department within a company sending a request to another: each has its own tools and data, but they collaborate by exchanging only the necessary information in a standardized format.

Google has published the complete draft specification of the A2A protocol on GitHub, detailing all information types and fields. Even without delving into these details, A2A provides a robust, flexible framework for multi-agent communication: capability discovery, task-based structured exchanges, continuous updates, and support for rich content—with built-in security.

Real-World Example: Hiring Candidates

Google provides a real-world example involving candidate recruitment. This scenario illustrates multiple AI agents using A2A to streamline the process of recruiting a software engineer. You don’t have to be a technical expert to follow—imagine each agent as a professional colleague with a specialized role and A2A as the language they use to coordinate.

- Assigning a Task to the Primary Agent: A hiring manager interacts with a primary AI assistant (referred to as the “HR agent”) through a unified interface such as Google’s Agentspace. The manager instructs the agent, “Find qualified candidates for our software engineer opening in New York who have Python experience.” The HR agent interprets this as a task to be completed.

- Finding a Specialist Agent: The HR agent might not have direct access to all candidate databases. It uses A2A’s capability discovery feature to locate a specialized “recruiting agent.” The recruiting agent’s profile (agent card) advertises that it can search recruitment sites or resume databases. The HR agent decides this is a good match and establishes a connection via A2A.

- Delegating the Candidate Search: Through A2A, the HR agent sends the candidate search task to the recruiting agent, including details such as the job description, location, and required skills (like Python). The recruiting agent then begins its work—querying LinkedIn, internal talent pools, or online résumés (using its own tools or protocols like MCP to access data).

- Receiving Candidate Information: Within a short time, the recruiting agent finds, say, five promising candidates and compiles their profiles. It returns these results as an artifact via A2A—a structured list of candidate information. The two agents may have negotiated on a format (possibly a nicely formatted table or cards with names and summaries). The HR agent then displays these candidate suggestions to the hiring manager. The manager doesn’t need to know about the recruiting agent—only that their HR assistant has magically fetched the relevant candidates.

- Scheduling Interviews: Next, the hiring manager selects a few candidates and says, “Please schedule interviews with these candidates.” The HR agent now faces a new task: arranging the interviews. It uses A2A to connect with a “scheduling agent.” This agent may integrate with calendars (Google Calendar, Outlook, etc.) and coordinate availability. The HR agent passes on candidate contacts and preferred times. Through A2A, the HR and scheduling agents may exchange further details—for instance, the scheduling agent might ask whether the interviews should be remote or in-person (user experience negotiation) or provide an update (“Interview for XXX confirmed for March 10 at 2 PM”). Eventually, the scheduling agent books the meeting and returns a confirmation. The hiring manager receives a notification that interviews are set, without having to manually coordinate the schedule.

- Conducting Background Checks: After interviews, another agent is brought into the process. The hiring manager requests background checks on the final candidate. The HR agent uses A2A to enlist a “background check agent.” This agent specializes in verifying work history, education, and conducting any necessary checks. The HR agent delegates the candidate information as a task to the background check agent, which conducts its work (possibly taking a day or two and involving external databases) and sends back a report. Meanwhile, A2A allows for status updates—for example, “background check 50% complete.” Once the report is received, the hiring process moves toward final decision-making.

- Seamless Collaboration: Throughout the process, multiple AI agents work together behind the scenes, each performing its role. The A2A protocol enables seamless collaboration: the HR agent doesn’t need to know how the other agents work, only what requests to make and how to speak their language. Each runs in its own environment (the recruiting agent accesses job databases, the scheduling agent taps into calendar APIs, etc.), but A2A acts as a bridge, enabling coordinated work. To the human user (the hiring manager), it feels like dealing with a super-intelligent assistant that handles the entire complex workflow with minimal supervision.
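The workflow above can be sketched as a primary agent delegating to specialists by skill. This is a toy, in-process model with no networking; the class, the skill names, and the canned results are all invented for illustration.

```python
from typing import Callable, List


class RemoteAgent:
    """Stand-in for a remote agent reachable over A2A (no network here)."""

    def __init__(self, name: str, skill: str, handler: Callable[[dict], dict]):
        self.name, self.skill, self.handler = name, skill, handler

    def send_task(self, task: dict) -> dict:
        return self.handler(task)  # the returned dict plays the artifact


def recruit(task: dict) -> dict:
    return {"candidates": [f"Candidate {i}" for i in range(1, 4)]}


def schedule(task: dict) -> dict:
    return {"confirmed": [f"{c}: interview booked" for c in task["candidates"]]}


def background_check(task: dict) -> dict:
    return {"report": f"{task['candidate']}: checks passed"}


REGISTRY: List[RemoteAgent] = [
    RemoteAgent("recruiting-agent", "candidate-search", recruit),
    RemoteAgent("scheduling-agent", "scheduling", schedule),
    RemoteAgent("background-check-agent", "background-check", background_check),
]


def delegate(skill: str, task: dict) -> dict:
    """HR agent side: find an agent with the skill and hand over the task."""
    agent = next(a for a in REGISTRY if a.skill == skill)
    return agent.send_task(task)


found = delegate(
    "candidate-search",
    {"role": "software engineer", "location": "New York", "skills": ["Python"]},
)
booked = delegate("scheduling", {"candidates": found["candidates"][:2]})
report = delegate("background-check", {"candidate": found["candidates"][0]})
print(booked["confirmed"], report["report"])
```

The HR agent never sees how each specialist does its work; it only knows which skill to ask for and what comes back.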

As Anthropic engineer Barry Zhang noted during the AI Engineer workshop on “How to Build Effective Agents,” one key point is: Don’t build agents for everything. Each agent doesn’t need to be a jack-of-all-trades; it only needs to handle its designated scenario.

Instead of relying on one all-encompassing AI (which might fail), you have a team of specialized AIs handling different tasks and passing work between each other.

The hiring manager’s productivity improves dramatically: what might have taken a recruitment team days or weeks (sourcing candidates, scheduling interviews, conducting background checks) is largely handled automatically by collaborating agents. These agents might even come from different vendors—for example, the recruiting agent could be a third-party HR software service while the scheduling agent integrates with Microsoft’s calendar system. As long as they use A2A, the HR agent (perhaps running on Google’s platform) can work with them seamlessly.

This cross-vendor interoperability is precisely the aim of A2A: giving users the freedom to choose the best AI for each task and enabling them to work together.

Google’s demo scenario is just one example. One can envision many other cases: an IT support agent delegating network issues to a specialized server agent or a personal assistant agent handing off travel booking to a dedicated travel agent, and so on.

When agents can chain together their strengths, the possibilities are endless.

A2A vs. MCP vs. Function Calling

Google’s A2A and Anthropic’s MCP are complementary protocols rather than direct competitors.

A2A is about agents conversing with one another to collaborate on tasks, while MCP connects agents to data sources and tools, providing them with context.

One might say MCP addresses the problem: “How does an AI assistant get the information it needs?” whereas A2A answers: “How do multiple AI assistants coordinate their actions?”

MCP provides a universal, open standard for connecting AI systems to data sources—replacing disparate integrations with a single protocol.

In practice, MCP allows AI models (like Anthropic’s Claude or even OpenAI’s ChatGPT) to retrieve external information or trigger actions in a secure, standardized way. For example, an MCP integration might enable an AI to query a corporate database, retrieve a document from Google Drive, or send a message on Slack, all via a common protocol. Developers can set up MCP servers to expose certain data or services, and AI agents (MCP clients) can invoke these servers as needed.

Anthropic likens MCP to a “USB-C port” for AI—providing a universal plug for data and tools.

MCP equips AI with context and auxiliary tools, rather than facilitating conversations between autonomous agents. For instance, if an AI agent requires customer data from a CRM, MCP can provide that data. However, MCP doesn’t define how one autonomous agent should seek help from another for reasoning-related tasks.

This is where A2A comes into play.

Google makes it clear: “A2A is an open protocol designed to complement Anthropic’s Model Context Protocol (MCP), which provides useful tools and context for agents.” An agent might use MCP to acquire the necessary data and then use A2A to collaborate with another agent to act on that data.

In conceptual terms, MCP connects an agent to data and tools, whereas A2A connects an agent to other agents.

Typically, MCP is used in single-agent scenarios (AI plus a data source), while A2A focuses on multi-agent scenarios (AI plus AI). For instance, using MCP, an AI might pull a document from Confluence; with A2A, the same AI could ask a document analysis agent to summarize that document. They address different pieces of the puzzle and can, in fact, be used together.

An online comment nicely captured this point: A2A and MCP together could form the backbone of the emerging “Internet of Agents”—a network where agents freely share context (via MCP) and capabilities (via A2A) to accomplish work.

OpenAI’s function calling takes a different approach to extending AI model capabilities.

In OpenAI’s scheme, developers can define functions (with names and parameter schemas) and pass these definitions to the AI model (GPT-4 or GPT-3.5). The model can then decide during the conversation to “call” one of those functions by outputting a JSON object that conforms to the function schema. An external caller (outside the model) detects this, invokes the function, and returns the result to the model so that the conversation can continue.

The idea is to enable ChatGPT to interact with external tools and APIs in a controlled manner. For example, if you ask ChatGPT, “What’s the weather in Chicago?”, it might call a function like get_weather(location) to retrieve the answer, then reply accordingly.
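The calling side of this flow can be sketched without contacting any model. Below, the model's output is simulated as a JSON string, and `get_weather` is a stand-in that returns a fixed answer. The exact wire format differs between API versions, so treat the shape of `simulated` as an assumption.

```python
import json


def get_weather(location: str) -> str:
    """Stand-in for a real weather API call."""
    return f"Sunny in {location}"


# Function definition the developer would pass to the model:
# a name plus a JSON Schema describing the parameters.
FUNCTION_SPECS = [
    {
        "name": "get_weather",
        "parameters": {
            "type": "object",
            "properties": {"location": {"type": "string"}},
            "required": ["location"],
        },
    }
]

# Maps function names the model may request to real Python callables.
AVAILABLE_FUNCTIONS = {"get_weather": get_weather}


def dispatch(model_output: str) -> str:
    """Run the function the model asked for and return its result."""
    call = json.loads(model_output)
    func = AVAILABLE_FUNCTIONS[call["name"]]
    return func(**call["arguments"])


# Simulated model output; no model is contacted in this sketch.
simulated = '{"name": "get_weather", "arguments": {"location": "Chicago"}}'
result = dispatch(simulated)
print(result)
```

The result would then be sent back to the model so it can phrase the final reply.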

Function calling effectively converts natural language queries into structured API calls. The model is trained to recognize when a function (such as a database query) should be used and to output precisely formatted JSON for it. This is a powerful technique for generating more accurate, actionable results, as the model delegates certain tasks to real-world functions (for example, mathematical calculations or database queries).

However, function calling is not a dialogue between two autonomous agents—it is more like an AI agent using a tool. The function being called is not an autonomous AI with its own intelligence; it’s simply code that performs a specific task.

In the function calling model, the AI remains in control, deciding if and when to use a function. By contrast, in the A2A world, remote agents have their own “brains.” They can even turn the conversation around to ask the client agent for additional context or handle multi-step processes internally. A2A is more symmetric and interactive.

For example, with function calling, ChatGPT might invoke schedule_meeting(person, time) to book a meeting—the function directly integrates with a calendar system and returns success or failure. With A2A, an agent would contact a scheduling agent and engage in dialogue (which might include negotiating alternate times if conflicts arise).
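A toy version of that dialogue shows the difference from a single call: the scheduling agent can answer with alternatives, and the client agent responds in a second turn. The message fields, status strings, and calendar data below are invented for illustration.

```python
from typing import Optional, Set, Tuple

# Hypothetical calendar state held by the scheduling agent.
BUSY = {"2025-03-10T14:00"}
OPEN_SLOTS = ["2025-03-10T15:00", "2025-03-11T10:00"]


def scheduling_agent(message: dict) -> dict:
    """Remote agent: book the slot, or ask the client to pick another."""
    requested = message["time"]
    if requested in BUSY:
        return {"status": "input-required", "alternatives": OPEN_SLOTS}
    return {"status": "completed", "booked": requested}


def book(preferred: str, acceptable: Set[str]) -> Tuple[Optional[str], int]:
    """Client agent: negotiate until a slot is booked or no option fits.

    Returns the booked time (or None) and the number of turns taken.
    """
    reply = scheduling_agent({"time": preferred})
    turns = 1
    while reply["status"] == "input-required":
        choice = next((t for t in reply["alternatives"] if t in acceptable), None)
        if choice is None:
            return None, turns  # nothing acceptable was offered
        reply = scheduling_agent({"time": choice})
        turns += 1
    return reply["booked"], turns


slot, turns = book("2025-03-10T14:00", {"2025-03-11T10:00"})
print(slot, turns)
```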

Function calling is a one-shot solution, whereas A2A facilitates ongoing, rich communication.

Additionally, A2A is designed to handle long-running tasks and streaming results, which function calling does not directly address. If a function call takes a long time, the model simply waits for the result; with A2A, an agent can initiate a task and then stay in sync with the other agent through progress updates. A2A also introduces negotiation over how results are returned (for instance, as text, an image, or a form), whereas function calling needs no such negotiation because the output schema is predefined.

In summary, function calling provides tools (functions) for AIs to use when necessary, while A2A creates a collaborative environment where AI colleagues work together. Function calling is typically a single round of call and response (one model to one function), whereas A2A supports multi-turn conversations between two intelligent agents.

Both approaches can be used to achieve complex results, but A2A is more flexible for scenarios requiring two AI systems to collaboratively reason or handle parts of a problem. In fact, one can imagine an agent that uses function calling as part of its toolkit and then connects with another agent via A2A—the layers can be stacked rather than being mutually exclusive.

Google’s AI Strategy Through A2A

Google’s launch of the Agent2Agent protocol is a strategic move that offers insight into the evolution of enterprise AI and Google’s vision for the future of AI systems.

Google did not create A2A in a vacuum—it emerged from observing how companies attempt to deploy AI at scale.

Many companies have been experimenting with “agent-based AI,” but one major challenge has been getting agents built by different teams or vendors to work together. Google notes that it leveraged its internal expertise in scaling agent systems and recognized interoperability as a key issue in deploying large-scale, multi-agent solutions for customers.

By introducing A2A, Google directly addresses this pain point. They offer customers a standardized way to manage numerous AI agents across “different platforms and cloud environments.”

Fundamentally, Google sees that without a protocol like A2A, the value of AI could be limited by isolated deployments—and they are acting to remove that barrier.

From the beginning, Google has positioned A2A as an open protocol with broad partner support. Over 50 technology companies and service providers (including Salesforce, SAP, Intuit, Accenture, and others) have been announced as contributors or supporters. This positions A2A as an industry standard rather than a proprietary Google API.

Strategically, this approach is similar to Google’s past initiatives (for example, open-sourcing Kubernetes for container orchestration). By being the first to launch an open standard, Google can influence the direction of the industry, ensure smooth integration with its own platforms (such as Vertex AI on Google Cloud), and avoid the perception of vendor lock-in.

It’s a “rising tide” approach—if A2A is widely adopted, Google’s services will stand out in a multi-agent world, and everyone will benefit from interoperability.

A2A was released via Google Cloud and is clearly tied to Google’s cloud strategy. Google aims to be the preferred provider for enterprises building and running AI agents. Beyond supplying AI models, it also provides the pipelines and “glue” (such as A2A alongside tools like the Agent Development Kit and Agent Orchestration in Vertex AI) to deliver a full-stack solution for enterprise AI.

The pitch, in effect, is: “We have the models and the platform (Vertex AI), and now with an interoperability standard (A2A), everything can connect together.” For companies needing various AI and software systems to cooperate, this comprehensive approach could be very attractive.

Google’s investment in an agent collaboration protocol indicates its anticipation that AI will evolve from a single monolithic model to a network of specialized agents.

This is a strategic vision worth noting. In recent years, the AI surge has concentrated on building larger, more capable individual models, but Google is recognizing that the next practical leap in AI performance may come from coordination. By launching A2A, Google is betting that future AI practice will involve the coordination of multiple agents rather than relying solely on one “super brain.”

It reflects a mindset that the next breakthrough in AI utility might come from combining and interconnecting systems—much like early Internet companies benefited from setting standards (think Google’s focus on web standards and Android’s openness).

It is also a defensive move: by creating an open standard, Google can preempt other companies from controlling the multi-agent interoperability layer.

For Google’s research direction, A2A hints that future work will explore multi-agent systems, communication, and coordination. We may see research on how agents negotiate, plan collaboratively, and maintain consistency during interactions.

It connects AI with distributed systems and even aspects of human–machine interaction (since agent collaboration often involves intermediate feedback to humans). Google’s introduction of A2A signals a desire to accelerate innovation in this area and set the agenda for how AI agents collaborate.

Advantages and Potential of A2A

At a high level, A2A provides a plug-and-play ecosystem for AI agents.

Companies can integrate the best agents from various vendors and have them work together. This flexibility means you are not locked into a single vendor’s solution—your Salesforce AI can converse with your Google AI, which in turn can interact with your internal AI, and so on.

This interoperability is vital to fully harnessing AI’s potential, as no single system can possess all the data or functionality needed in every situation.

A2A allows each agent to focus on what it does best, and then combine those results.

This specialization can yield better outcomes. Rather than trying to build one all-powerful agent, you can have a financial agent, a legal agent, a marketing agent—each an expert in its domain—and when they work together via A2A, they can handle a complex project collectively. As seen in the recruitment scenario, this can greatly streamline workflows.

It’s akin to the way human teams outperform individuals when tackling complex tasks. Agents can delegate subtasks to those with the right expertise, resulting in higher-quality outputs and faster completion times. Google and its partners suggest that A2A could enable richer, large-scale delegation and collaboration in this way.

By chaining tasks between agents, A2A can drive further automation.

Agents can autonomously trigger subsequent actions by calling on other agents. This means end-to-end processes can be handled with minimal human intervention. To a user, it feels like having an army of assistants coordinating on your behalf. You might tell your AI assistant, “Take care of my travel plans,” and it would silently activate agents to book flights, arrange hotels, rent cars, and so forth.

Users do not need to manually coordinate the separate steps. This dramatically boosts efficiency, achieving outcomes that would be beyond the capacity of any single agent.

Thanks to A2A’s support for long-term interactions and streaming, agents can handle continuous or open-ended tasks and adapt as situations change. For example, an agent managing a supply chain could stay in touch via A2A and adjust orders and logistics in real time if delays or shortages are detected. This dynamic feedback loop allows AI systems to be more responsive and robust when executing critical tasks.

It isn’t a “fire-and-forget” approach; the agents remain in sync throughout the task lifecycle.

The user experience negotiation feature means that outputs from multi-agent collaboration can be presented in the most effective way to users. Agents can provide results not just as plain text but also as rich media, interactive elements, or any format that the user interface supports.

This makes AI assistance more intuitive and visually engaging.

Imagine one agent generating a data visualization while another ensures it’s properly formatted for a dashboard—the result is a polished, digestible answer. Essentially, A2A helps ensure that when agents collaborate, the final product is cohesive and meets user needs.

Being open-source and welcoming contributions, A2A can also stimulate a community of innovation. Anyone can build an agent that complies with the A2A standard and immediately work with thousands of other agents.

This opens the door to an “app store” or ecosystem of agents, where new specialized agents can emerge and be rapidly adopted. Google’s collaboration with many partners indicates that we will see a variety of agents and integrations built around the A2A standard. It’s reminiscent of how standardized internet protocols spurred an explosion in online services. Here, a standard for AI agents might lead to explosive growth in interoperable AI services that work together to solve problems. This could accelerate AI adoption, as companies can mix and match solutions without worrying about vendor incompatibility.

In short, A2A has the potential to usher in a new era of agent interoperability that fosters innovation and yields more powerful, versatile agent systems.

For enterprises, having a standard communication protocol for agents also means better oversight. A2A allows for more consolidated tracking of requests and results exchanged between agents, rather than each agent operating as a black box. This can simplify compliance and auditing for enterprise AI decision-making. Google specifically notes that enterprises will benefit from a standardized approach to managing different agents—likely due to its integration with existing management tools and security controls.

Simply put, A2A could make unified application management easier (for example, logging all cross-agent interactions or enforcing that certain data never leaves specific agents). More broadly, A2A points to a future where AI isn’t a single super-brain, but a network of brains working together.

Just as human organizations scale through specialization and communication, AI systems might scale by uniting the intelligence of many agents. Google’s A2A is a significant step in that direction.

Limitations and Challenges

Despite its promise, A2A faces obstacles. The specification is still in draft form, and its benefits depend on network effects: the protocol reaches its full potential only when many agents implement it. Google has many partners on board, but widespread industry adoption will take time.

In the short term, the landscape may fragment: some companies will adopt A2A while others take different approaches, which could produce competing standards or simply leave the industry without an agreed method.

Google’s challenge will be to drive adoption and work with a standards body to formalize the protocol. Until it becomes ubiquitous, developers may face a patchwork of integration methods.

Using a standardized protocol means every agent interaction has to go through a defined interface—typically over HTTP/JSON. This compatibility is beneficial, but compared to tightly integrated systems, it may introduce performance overhead.

Calling a remote agent via A2A is typically slower than calling a local function because of network latency and JSON serialization and deserialization. If an agent needs to make dozens of sub-calls, these delays add up. There is also development overhead: agents must be programmed to handle the A2A protocol and all its message types, which is more work than a one-off integration.
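To see how quickly these delays accumulate, here is a back-of-the-envelope model. The figures (36 sub-calls, an 80 ms round trip, 2 ms of JSON encoding and decoding per call) are assumptions chosen for illustration, not measurements of any A2A implementation, and the concurrent case assumes the sub-calls are independent of one another.

```python
def sequential_latency_ms(n_calls, rtt_ms, codec_ms):
    """Total delay when each sub-call waits for the previous one."""
    return n_calls * (rtt_ms + codec_ms)


def concurrent_latency_ms(n_calls, rtt_ms, codec_ms):
    """Delay when independent sub-calls overlap on the network.

    Encoding and decoding still happen once per call on the caller;
    only the network round trips overlap.
    """
    if n_calls == 0:
        return 0
    return n_calls * codec_ms + rtt_ms


print(sequential_latency_ms(36, 80, 2))  # 2952
print(concurrent_latency_ms(36, 80, 2))  # 152
```

Under these assumptions, issuing independent sub-calls concurrently recovers most of the lost time, while chains of dependent calls pay the full sequential cost.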

Over time, SDKs and libraries will mitigate this, but initially, enabling A2A support will require effort.

Designing multi-agent collaboration systems is inherently more complex than single-agent solutions. Developers must consider how to assign tasks to the best agent, handle non-responsive agents or mid-task failures, and determine what to do if two agents get stuck in a loop or disagree.
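One simple guard against two agents bouncing a task back and forth is a hop counter carried in the message, similar in spirit to a TTL on a network packet. The sketch below is an assumption about how such a guard could look; the `hops` and `trace` fields and the `MAX_HOPS` limit are hypothetical and not defined by A2A.

```python
MAX_HOPS = 8  # assumed limit; tune per workflow


class LoopDetected(Exception):
    """Raised when a message has been forwarded too many times."""


def forward(message, next_agent):
    """Return a copy of the message addressed to next_agent.

    Raises LoopDetected once the message has already made MAX_HOPS hops.
    """
    hops = message.get("hops", 0) + 1
    trace = message.get("trace", [])
    if hops > MAX_HOPS:
        raise LoopDetected(f"gave up after {MAX_HOPS} hops: {trace}")
    return {**message, "hops": hops, "trace": trace + [next_agent]}
```

The recorded trace also helps with debugging, since it shows which agents handled the task before the guard tripped.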

These issues are akin to distributed computing challenges and require robust coordination logic. Google is providing tools (such as the Agent Development Kit and Agent Orchestration in Vertex AI) to help manage these concerns, but for many, this remains a new paradigm. Debugging interactions across agents could be tricky—if a problem arises, you must trace conversations across multiple systems.

There are many potential failure points in a multi-agent workflow. Networks can fail, an agent might misinterpret a task, or an agent might produce an invalid artifact. The system needs to be resilient—possibly through retries, fallback agents, or human intervention. This adds design overhead.
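A minimal sketch of that resilience pattern follows: retry transient network errors, move to a fallback agent when an artifact is invalid, and hand off to a human when every option is exhausted. The agents here are plain Python callables standing in for remote agents, and the `None` check is a placeholder for real artifact validation; none of this is prescribed by A2A.

```python
import time


def call_with_fallback(task, agents, retries=2, backoff_s=0.0):
    """Try each agent in order; retry transient failures; escalate if all fail."""
    errors = []
    for agent in agents:
        for attempt in range(retries + 1):
            try:
                result = agent(task)
            except ConnectionError as exc:  # transient network failure: retry
                errors.append(f"{agent.__name__} attempt {attempt + 1}: {exc}")
                time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
                continue
            if result is not None:  # placeholder for artifact validation
                return {"status": "ok", "result": result, "errors": errors}
            errors.append(f"{agent.__name__}: invalid artifact")
            break  # a bad artifact is not retried; try the next agent
    return {"status": "needs_human", "result": None, "errors": errors}
```

Returning the accumulated errors alongside the result keeps the failure history available for monitoring even when the call eventually succeeds.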

For example, if an agent fails to complete a long-running task, the initiating agent must decide whether to wait longer or take alternative steps. The effectiveness of these protocols depends on how they handle such failure modes. It remains to be seen how A2A will deal with all edge cases in practice. Early adopters will likely discover areas in the specification that need clarification or improvement.
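The wait-or-move-on decision for a long-running task can be sketched as polling against a deadline. In this example `get_status` is a hypothetical callable that reports the remote task's state, and the state names are illustrative; what to do on a timeout (extend the deadline, cancel, or reroute) is left to the caller.

```python
import time


def wait_for_task(get_status, deadline_s, poll_s=0.01,
                  clock=time.monotonic, sleep=time.sleep):
    """Poll until the task finishes or the deadline passes."""
    start = clock()
    while True:
        status = get_status()
        if status in ("completed", "failed"):
            return status
        if clock() - start >= deadline_s:
            return "timed_out"  # caller decides: extend, cancel, or reroute
        sleep(poll_s)
```

Using a monotonic clock avoids surprises if the system time changes while the task is running.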

Many of these challenges are similar to those in other fields (such as microservices, distributed computing, B2B APIs), so there is prior experience to draw upon. With time, Google’s involvement and the open community can address these issues. However, in the short term, anyone implementing A2A should proceed with caution, thorough testing, and careful monitoring.

Future Trends

Google’s Agent2Agent protocol is part of a broader trend in AI—from isolated intelligence to connected intelligence.

Just as the Internet connected computers around the world, we might soon see a network of AI agents that can discover and interact with each other in real time. Protocols like A2A and MCP lay the groundwork for what some call the “Internet of Agents.”

In this vision, whenever an agent needs a specific capability (be it data access or expertise), it can consult an agent directory much like devices look up services online. They then connect via standardized protocols to form temporary alliances to solve problems. It sounds futuristic, but the building blocks are gradually being assembled.
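The directory lookup described here can be pictured as a small registry keyed by capability. The sketch below is illustrative only: `AgentEntry`, `AgentDirectory`, the capability names, and the example URLs are all hypothetical, and a real directory would be a network service rather than an in-memory list.

```python
from dataclasses import dataclass, field


@dataclass
class AgentEntry:
    name: str
    url: str  # placeholder endpoint, not a real service
    capabilities: set = field(default_factory=set)


class AgentDirectory:
    def __init__(self):
        self._entries = []

    def register(self, entry):
        self._entries.append(entry)

    def find(self, *needed):
        """Return entries that advertise every requested capability."""
        want = set(needed)
        return [e for e in self._entries if want <= e.capabilities]


directory = AgentDirectory()
directory.register(
    AgentEntry("pricing", "https://pricing.example/a2a", {"pricing", "forecast"}))
directory.register(
    AgentEntry("recruiter", "https://hr.example/a2a", {"screening"}))
print([e.name for e in directory.find("forecast")])  # ['pricing']
```

An agent that needs a forecast would query the directory, pick one of the returned entries, and then contact it over the shared protocol.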

If this vision is realized, AI agents may eventually form a complex supply chain of intelligence, exchanging information and taking action on a global scale.

With major players like Google and Anthropic driving open protocols, we are likely to see a convergence of standards. There may be efforts to ensure smooth cooperation between A2A and MCP (which are inherently complementary), possibly even a unified framework or reference implementation. An industry consortium or working group might emerge to manage these agent interaction standards—similar to how Internet standards are governed.

If other tech giants (Microsoft, Amazon, etc.) join in, they might propose their own ideas or requirements, potentially broadening the scope of agent protocols.

Managing multi-agent systems could become its own category of software. We are already seeing early signs in platforms like Google’s Vertex AI Agent Engine and the Agent Development Kit.

In the future, there might be dedicated “agent orchestration platforms” for deploying, monitoring, and optimizing fleets of agents within enterprises. These platforms would operate behind the scenes using protocols like A2A and provide dashboards to configure agent workflows, set policies (such as which agents can converse or execute particular tasks), and analyze performance.

Essentially, as multi-agent collaboration becomes more commonplace, tools to manage such collaboration will mature. This is similar to how microservices led to the rise of Kubernetes and service meshes; multi-agent systems may eventually require their own management layers.

As agents increasingly work together in the background, the way humans supervise or interact with these composite workflows will also evolve. We might see visual process maps or conversational interfaces that show users, “This is what your agents are doing right now.”

A manager might even intervene with an instruction such as “Tell the recruiting agent to also filter for Python expertise,” effectively interacting not with one AI but with an AI team. New user experience paradigms may emerge for coordinating and overseeing agent teams. Google’s mention of unified interfaces such as Agentspace hints at an early vision for a single hub where users manage multi-agent collaboration.

Ultimately, enterprise AI is moving from a single monolithic model to a “division of labor plus interconnection” approach. The emergence of the A2A protocol means that agents can cross vendor and tech-stack boundaries to work together on complex, multi-step workflows. Coupled with other open standards (like MCP) and various function-calling mechanisms, multi-agent collaboration can break out of the “information silos” that have hampered progress and deliver substantial gains in efficiency and flexibility.

Of course, all of this is still in its early stages: ecosystem building, performance tuning, standardized governance, and strategies for managing and securing multi-agent systems remain pressing challenges. Even so, A2A both responds to today’s need for distributed intelligence and points toward a future in which AI takes the form of a networked “Internet of Agents.” As more vendors and developers join in, we can expect unified protocols and toolchains to mature, ultimately enabling an open, interconnected agent network across industries.